Hooking the Future: Why AI Inference Remains Front and Center

Picture this: a factory floor that adjusts in real time to supply and demand, a medical imaging system that flags anomalies as a patient walks in, and a voice assistant that understands you with near-human accuracy. All of these rely on one thing to work at scale: artificial intelligence (AI) inference. Inference is the phase where a trained AI model is actually used to produce outcomes, make decisions, or drive actions in the real world. It’s the engine that turns theory into practice. For investors, a growing AI inference market means not just more chips and cloud computing, but broader adoption across industries that touch everyday life.

The Shift From Training to Inference: Why It Matters for Valuation

Early AI progress centered on training: large, compute-heavy jobs that create new models. But the real growth comes when those models are used to deliver value at scale, across millions of users and devices. The AI inference market represents the practical, revenue-driving layer: real-time recommendations, automated diagnostics, autonomous navigation, and more. Analysts project that the market could grow from roughly $106 billion today to about $255 billion by 2030. Assuming a five- to six-year horizon, that implies a compound annual growth rate of roughly 16% to 19%, even as supply chains and geopolitics add complexity.
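The growth rate implied by that projection depends on how many years separate "today" from 2030, which the projection does not state. The short Python sketch below applies the standard compound annual growth rate formula to the $106 billion and $255 billion figures. The function name `cagr` and the three horizons tried (5, 6, and 10 years) are assumptions chosen for illustration, not part of the analysts' forecast.

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate as a fraction (0.10 means 10% per year)."""
    if start_value <= 0 or end_value <= 0 or years <= 0:
        raise ValueError("start_value, end_value, and years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0


# Market-size figures quoted in the projection, in billions of dollars.
START_BILLIONS = 106.0
END_BILLIONS = 255.0

# The base year is not stated, so these horizons are assumptions.
for years in (5, 6, 10):
    rate = cagr(START_BILLIONS, END_BILLIONS, years)
    print(f"{years:>2} years: {rate:.1%} per year")
```

Under these assumptions the result is about 19% a year over five years, about 16% over six, and about 9% over a full ten years, so the horizon chosen changes the headline growth rate considerably.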

Key Drivers Powering the AI Inference Surge

Three forces are pushing demand for AI inference higher and faster than ever:

- Hardware specialization: Accelerators and GPUs are optimized for low-latency inference, lowering per-query costs and enabling real-time analytics at scale.

- Cloud and edge convergence: Inference now runs in data centers and closer to users at the edge, reducing round-trip times and preserving privacy where needed.

- Software ecosystems: Efficient inference libraries, model compression, and managed AI services make it easier for companies to deploy AI without in-house experts.

As more companies adopt AI across verticals—finance, healthcare, manufacturing, retail, and transportation—the need for fast, reliable inference grows. This creates an enduring demand for hardware, software, and cloud services that optimize AI workloads. In turn, it helps explain why the AI inference market could hit a multi-hundred-billion-dollar size by the end of the decade.

Where Inference Is Making Real-World Differences

Across industries, the practical benefits of AI inference are becoming visible in several high-impact areas:

- Healthcare: Real-time imaging analysis and triage support reduce misreads and speed up patient care.

- Finance: Fraud detection and risk assessment rely on rapid, scalable inference to monitor streams of data.

- Retail and customer experience: Personalization engines and demand forecasting improve conversion and inventory management.

- Manufacturing: Predictive maintenance and supply chain optimization cut downtime and costs.

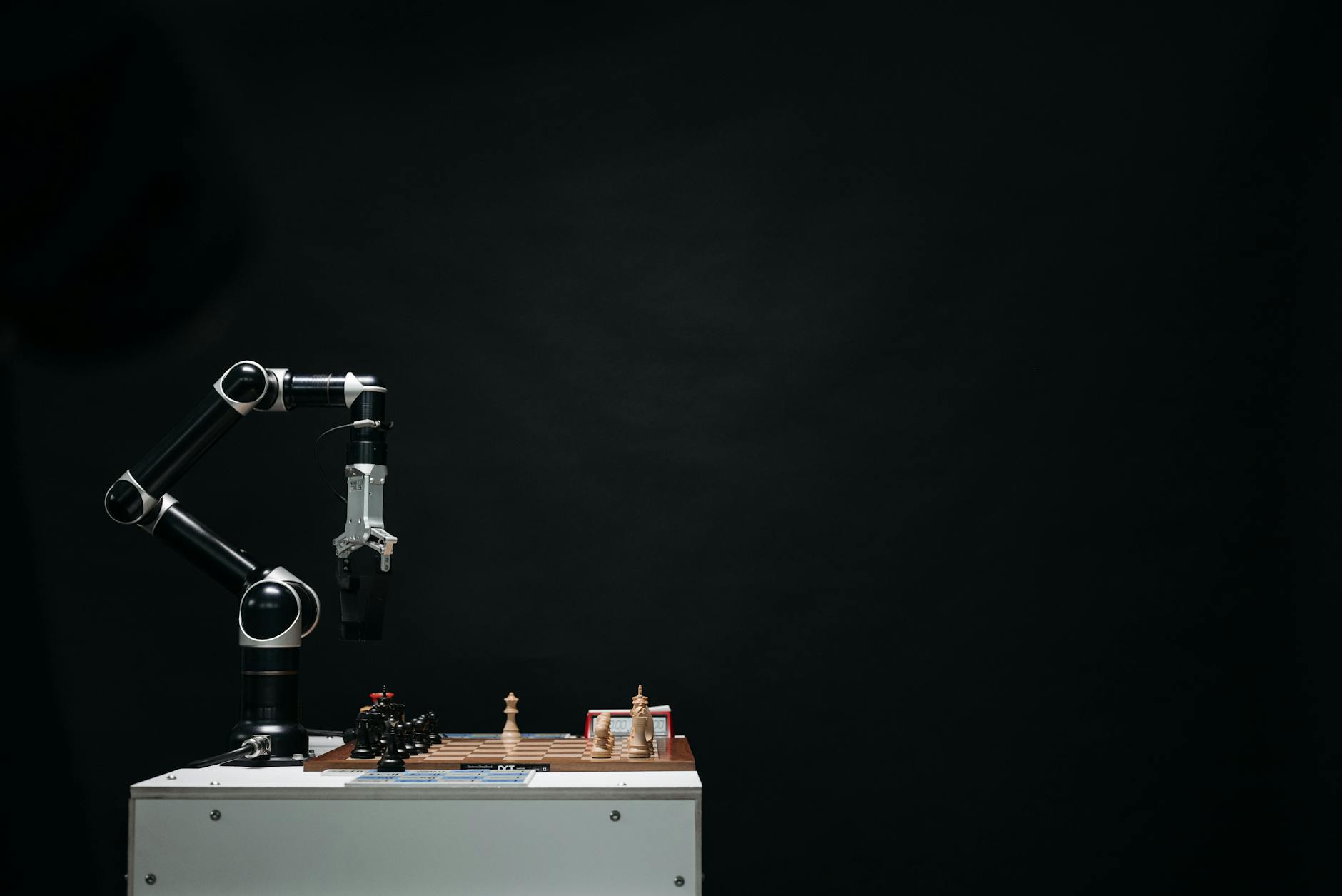

- Automotive and robotics: Autonomous and assistive systems depend on efficient inference for safety and performance.

In each case, the bottom line comes down to speed, accuracy, and cost. AI inference that runs faster, uses less energy, and fits on a smaller hardware footprint translates into meaningful savings and faster time-to-value for organizations adopting AI.

Three Stocks Best Positioned to Win in AI Inference

When analyzing the AI inference landscape, three U.S.-listed names consistently emerge as well-positioned to benefit from the growth in AI inference activity. These picks combine leadership in hardware acceleration, cloud services, and scalable AI workloads. Note that investing in stocks involves risk, and diversification remains essential.

NVIDIA (NVDA): The Inference Engine Behind AI Workloads

NVIDIA stands out as a core enabler of AI inference through its highly optimized hardware and software stack. Its GPUs power large-scale AI deployments, and its software tools for model deployment and optimization help customers convert trained models into real-time AI applications. The company’s dominance in inference accelerators and its strong ecosystem for developers make it a natural beneficiary of a rising AI inference cycle.

What to watch:

- Data-center revenue and the growth rate of AI-related product families.

- Advancements in inference-optimized chips and software optimization layers such as compilers and runtime libraries.

- Customer breadth across cloud providers, tech firms, and enterprise users.

Microsoft (MSFT): Cloud Scale, AI Services, and Inference as a Service

Microsoft’s Azure platform anchors a broad portfolio of AI services, including enterprise-grade inference capabilities, tools for building and deploying models, and a growing library of AI-powered, business-focused applications. As enterprises push more AI workloads into the cloud, Microsoft benefits from recurring revenue and the ability to monetize inference at scale. The synergy between Windows, Microsoft 365, and Azure AI creates a sticky customer base and robust, long-term revenue visibility.

What to watch:

- Azure AI adoption rates among Fortune 1000 firms and mid-market customers alike.

- Advances in hyperscale inference infrastructure and responsible AI tooling.

- Capital allocation that supports ongoing AI investments without sacrificing cash flow quality.

Amazon (AMZN): AI Services, Cloud, and Inference at Scale

Amazon Web Services remains a leading platform for developers deploying AI workloads. Its breadth across compute, storage, and managed AI services positions AMZN to capture a meaningful portion of the AI inference market, especially as enterprises seek end-to-end solutions from a single provider. AWS inference offerings combine scalability with a broad customer base, creating potential for steady, high-velocity demand as AI adoption accelerates.

What to watch:

- Growth in pay-per-use AI APIs and managed inference services.

- Frictionless integration with other AWS services that streamline AI deployment.

- Competitive dynamics in cloud AI services and pricing discipline.

How to Build Practical AI Inference Exposure

Investing in the AI inference wave doesn’t require picking only hardware stocks or only cloud players. A balanced approach can help you participate in the upside while managing risk. Here’s a practical framework you can use:

- Core exposure: Consider a leading hardware stock for inference accelerators (like NVDA) as a backbone of your tech exposure to AI inference.

- Cloud and services layer: Add a cloud platform leader (MSFT or AMZN) to capture recurring AI revenue streams tied to inference workloads.

- Diversification within AI: Include a broader tech exposure via an ETF or fund that targets AI, data infrastructure, or automation to spread risk across AI inference players with different business models.
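One way to make the three-layer framework above concrete is to track how far each layer has drifted from a target weight. The Python sketch below is purely illustrative: the sleeve names, dollar amounts, target weights, and the 5-percentage-point tolerance band are all hypothetical values chosen for the example, not recommendations or figures from this article.

```python
from typing import Dict, List


def drift_from_target(values: Dict[str, float], targets: Dict[str, float]) -> Dict[str, float]:
    """Return current weight minus target weight for each sleeve."""
    if set(values) != set(targets):
        raise ValueError("values and targets must cover the same sleeves")
    total = sum(values.values())
    if total <= 0:
        raise ValueError("portfolio value must be positive")
    if abs(sum(targets.values()) - 1.0) > 1e-9:
        raise ValueError("target weights must sum to 1")
    return {name: values[name] / total - targets[name] for name in targets}


def needs_rebalance(drift: Dict[str, float], band: float = 0.05) -> List[str]:
    """List the sleeves whose drift exceeds the tolerance band."""
    return [name for name, d in drift.items() if abs(d) > band]


# Hypothetical holdings in dollars and hypothetical target weights.
holdings = {"hardware_core": 5500.0, "cloud_platform": 3000.0, "ai_etf": 1500.0}
targets = {"hardware_core": 0.40, "cloud_platform": 0.30, "ai_etf": 0.30}

drift = drift_from_target(holdings, targets)
for name, d in drift.items():
    print(f"{name}: {d:+.1%} vs target")
print("Outside band:", needs_rebalance(drift))
```

In this made-up example the hardware sleeve has grown to 55% of the portfolio against a 40% target, so it and the underweight ETF sleeve are flagged, while the cloud sleeve sits on target.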

Realistic Scenarios for 2030

Imagine a 2030 world where AI inference underpins everyday decisions across sectors. Hospitals use inference-powered triage to shorten patient wait times and guide personalized care. A factory runs its maintenance program in real time, predicting machine failures before they happen and avoiding costly downtime. A retailer uses inference to tailor promotions to millions of customers while optimizing inventory in real time. In each scenario, the common thread is efficient, scalable inference that reduces latency, saves energy, and lowers total cost of ownership.

From an investor’s lens, these outcomes translate into durable revenue streams for the companies supplying the AI inference stack—from chips and accelerators to cloud services and managed AI tools. The result could be a multi-year period of above-market earnings growth for leaders in this space, with the AI inference theme driving earnings visibility and strategic partnerships.

Investing Safely in a Rapidly Evolving Market

AI inference is a rapidly evolving area. While the growth potential is compelling, the landscape features cycles of technological breakthroughs, competition, and regulatory considerations. Here are a few risk-management ideas:

- Valuation discipline: In a space that features headline-level growth, valuations can swing. Favor companies with clear paths to sustainable margins and durable demand for AI inference services.

- Balance sheet health: Strong cash flow and manageable debt help navigate investment cycles and price competition in hardware and services.

- Diversification: Consider blending individual stock picks with thematic ETFs that focus on AI, data infrastructure, and cloud services to spread risk.

Frequently Asked Questions

What exactly is AI inference, and why does it matter for investors?

Inference is the phase where a trained AI model is used to generate outputs in real time. It matters to investors because it’s the stage where AI makes money—through cloud services, hardware acceleration, and enterprise AI applications—driving revenue, margins, and long-term growth potential.

Which sectors are most likely to benefit first from AI inference growth?

Healthcare, finance, manufacturing, retail, and logistics stand out as early beneficiaries. Each sector can deploy inference-driven solutions that reduce costs, improve accuracy, and speed decision-making, creating durable demand for AI hardware, software, and cloud services.

How should a retail investor approach exposure to AI inference winners?

Start with a core position in a major AI hardware or cloud stock, then layer in complementary exposures via ETFs or funds focused on AI and data infrastructure. Keep position sizes reasonable, and rebalance as valuations evolve and new data on growth emerges.

Are there risks to the AI inference growth story I should monitor?

Yes. Key risks include regulatory changes, supply chain disruptions for hardware, competition driving price pressure, and potential delays in customer AI adoption. Diversification and ongoing research can help manage these risks.

Conclusion: Positioning for the AI Inference Wave

The trajectory for AI inference looks compelling through 2030 and beyond. The shift from training to inference, the expansion of cloud and edge capabilities, and the ongoing drive toward smarter, faster decision-making create a fertile environment for leading hardware, software, and cloud players. By focusing on companies with scalable AI inference offerings, strong cash flow, and resilient competitive advantages, investors can participate in a transformative trend while managing risk through diversification and disciplined portfolio construction.
