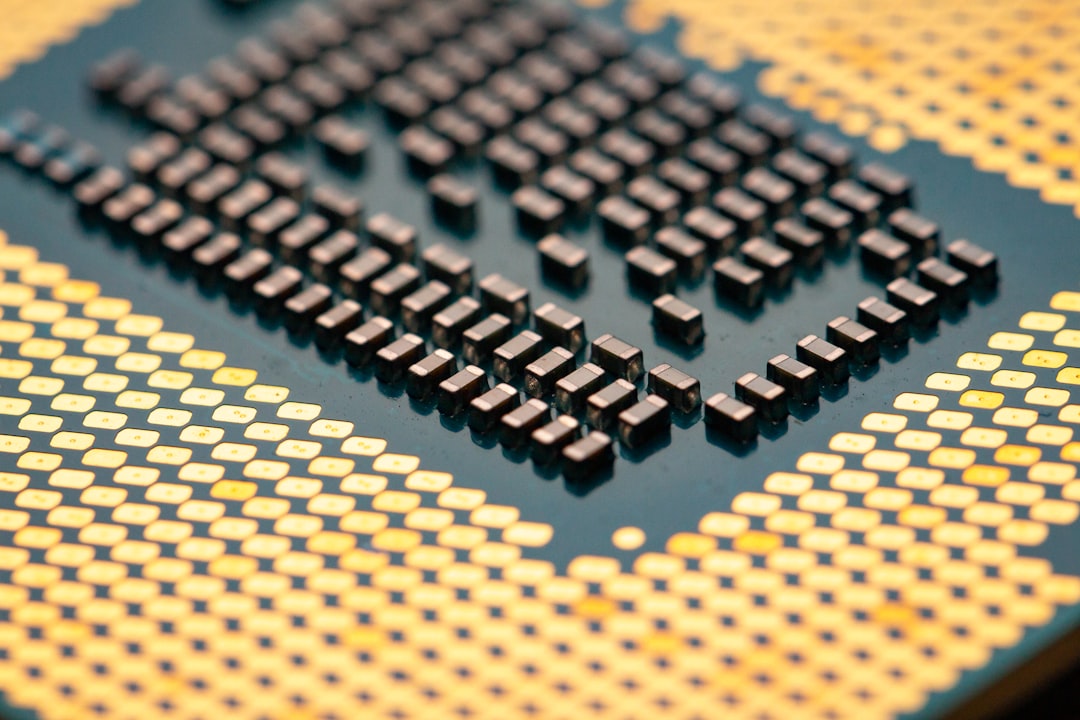

Nvidia Signals a Major Platform Pivot Focused on Inference

In a move that could reshape how Nvidia captures AI deployments, the company is quietly pursuing a new chip platform designed specifically for inference workloads. The aim is to address the growing share of AI tasks that run once models are trained and deployed in production, such as real-time recommendations, chatbots, and enterprise analytics. The industry has increasingly split AI work into training and inference, with inference becoming the dominant live-use case as organizations scale AI across operations.

Wall Street watchers are asking whether this could be Nvidia’s next big catalyst for the stock. The company hasn’t disclosed a formal product name or exact launch date, but several people familiar with the matter say the roadmap emphasizes efficiency, lower latency, and tighter integration with the software ecosystems that enterprises rely on for deployment. If true, the platform could broaden Nvidia’s reach beyond data centers to edge environments and managed cloud services where inference tasks are growing fastest.

What We Know About the Platform

Sources describe a multi-chip system that blends advanced GPUs with dedicated accelerators and a software stack tuned for inference throughput. The concept builds on Nvidia’s experience with data-center GPUs, but it’s expected to emphasize power efficiency and predictable performance in streaming workloads rather than peak training speed alone. Inference workloads typically require low-latency results and high throughput, attributes that Nvidia has signaled it will optimize through specialized interconnects and memory hierarchies.

Industry observers say the platform would be designed to support a broad range of AI tasks—from natural language processing and computer vision to anomaly detection in industrial settings. The goal would be to provide a unified solution that reduces latency, lowers total cost of ownership, and simplifies deployment across on-premises and cloud environments. This focus could help Nvidia compete more aggressively with incumbents that have leaned into inference accelerators for hyperscalers.

Analysts are eyeing several potential technical features, including enhanced tensor cores, tighter software co-design with AI frameworks, and a production roadmap aligned with the move toward smaller, more energy-efficient nodes. While the specifics remain confidential, industry chatter suggests a platform intended to scale from compact edge devices to massive data-center clusters, with a likely emphasis on reliability and security for enterprise workloads.

An Nvidia spokesperson declined to comment beyond confirming ongoing roadmap discussions, but several executives have signaled that the company intends to accelerate product diversification as AI adoption broadens. While details are still scarce, the convergence of software and hardware optimization appears to be a central theme of the evolving plan.

Why Inference Is the Focus Now

The AI market has shifted from largely training-driven growth to deployment, with inference workloads representing a fast-growing segment of utilization. Enterprises want real-time insights, personalized experiences, and automated decision-making powered by AI, and those capabilities hinge on efficient inference engines. Nvidia’s leadership in graphics processing units and AI accelerators positions the company well to monetize this demand through a holistic platform that covers both the compute and the software layers of an inference stack.

As workloads move toward inference, customers seek hardware that can deliver consistent performance at lower energy costs per operation. The new platform would need to deliver predictable results across a broad spectrum of AI models, including large language models and multimodal networks. If the platform can hit these targets, it could become a standard reference architecture for enterprises seeking a turnkey AI acceleration solution.

From a market perspective, the shift toward inference has supported rapid revenue growth in Nvidia’s data-center segment in recent years. A platform explicitly optimized for inference could extend that growth runway, particularly if it lowers operating costs for customers who already rely on Nvidia GPUs and software frameworks for AI workloads. This could create a longer tail of recurring revenue from software licenses, developer tools, and cloud partnerships.

Market Reaction and Timing

The potential move has generated cautious excitement among investors who have watched Nvidia navigate a volatile set of macro conditions, including supply-chain dynamics and shifting AI investment cycles. While no formal product rollout has been announced, market chatter has translated into stronger interest in Nvidia’s near-term trajectory and a renewed focus on the company’s roadmap in investor briefings.

Analysts say timing will be critical. If the platform is positioned for delivery within the next 12-24 months, it could serve as a tangible catalyst that complements ongoing AI demand. If delays push the timeline out, the stock might hinge more on external AI market momentum than on a single product event. In either case, the broader AI cycle and Nvidia’s execution discipline will play a central role in how investors price the concept today.

For perspective, market observers note that AI-driven hardware shortages and supply restrictions affected the sector in late 2023 and 2024, but a steady improvement in supply chains has supported a more constructive 2025-2026 environment. Within this context, a new platform aimed at inference could amplify Nvidia’s competitive advantages if it arrives with a robust ecosystem of partners, software libraries, and developer tools.

Some investors are asking whether this could be Nvidia’s next phase of expansion across data center footprints and edge deployments, given the growing importance of real-time inference in sectors such as healthcare, finance, manufacturing, and retail. A potential multi-region rollout would test Nvidia’s ability to scale manufacturing, distribution, and support across global markets, a factor that typically matters to institutional buyers and long-horizon investors.

Analysts and Industry Voices

Industry analysts paint a picture of cautious optimism. A senior analyst at MarketPulse says, 'The idea of an inference-centric platform aligns well with current AI adoption trends and Nvidia’s existing scale in data centers.' The analyst adds that success hinges on software integration and a clear path to profitability for customers adopting the platform at scale.

A technology research firm notes that the move could help Nvidia diversify beyond traditional GPU licensing and capital-intensive hardware cycles. 'If the platform delivers the right mix of performance, power efficiency, and total cost savings, it could become a defining product cycle,' the firm writes in a note to clients.

An Nvidia veteran who recently spoke to investors on condition of anonymity cautions that execution will matter more than ambition. 'There’s a lot of interest in the concept, but customers will want to see a clear value proposition, a credible roadmap, and support for popular AI models out of the box,' the person said. The sentiment mirrors a broader theme in enterprise tech, where buyers prize end-to-end solutions that reduce the complexity of AI deployments.

Wall Street’s reaction will likely hinge on how Nvidia communicates its roadmap, the scale of partnerships, and the economic impact on customers who adopt the platform. If the company can demonstrate a blend of performance gains, cost efficiency, and an open ecosystem, the market could reward the stock with a renewed appetite for multi-year growth potential.

Key Data Points to Watch

- Platform focus: Inference workloads across data centers and edge environments

- Expected benefits: Lower latency, higher throughput, improved energy efficiency

- Potential launch window: Pilot programs in 2026; wider availability in 2027

- Partnership potential: Software stacks and cloud providers aligned for rapid adoption

- Impact on Nvidia’s revenue mix: Higher contribution from software and platforms, not just hardware

Against the current market backdrop, investors are balancing traditional growth drivers with the prospect of new product cycles. This could be Nvidia’s next phase in extending its leadership in AI acceleration, especially if the platform can deliver measurable returns for customers across sectors. The road ahead is uncertain, but the strategic emphasis on inference aligns with a broader demand trend that has stayed resilient through cyclical shifts.

Bottom Line

As Nvidia weighs a bold move into a new chip platform tuned for inference workloads, the industry is watching whether the plan translates into a tangible product with a viable commercial model. This could be Nvidia’s next opportunity to transform its product stack, extend its enterprise footprint, and sustain its AI leadership in a landscape where demand for real-time, production-ready AI is rising fast. If execution meets expectations, investors could see a fresh catalyst emerge as early as late next year, with a broader rollout to follow.
