AI Is So Much More Than GPUs

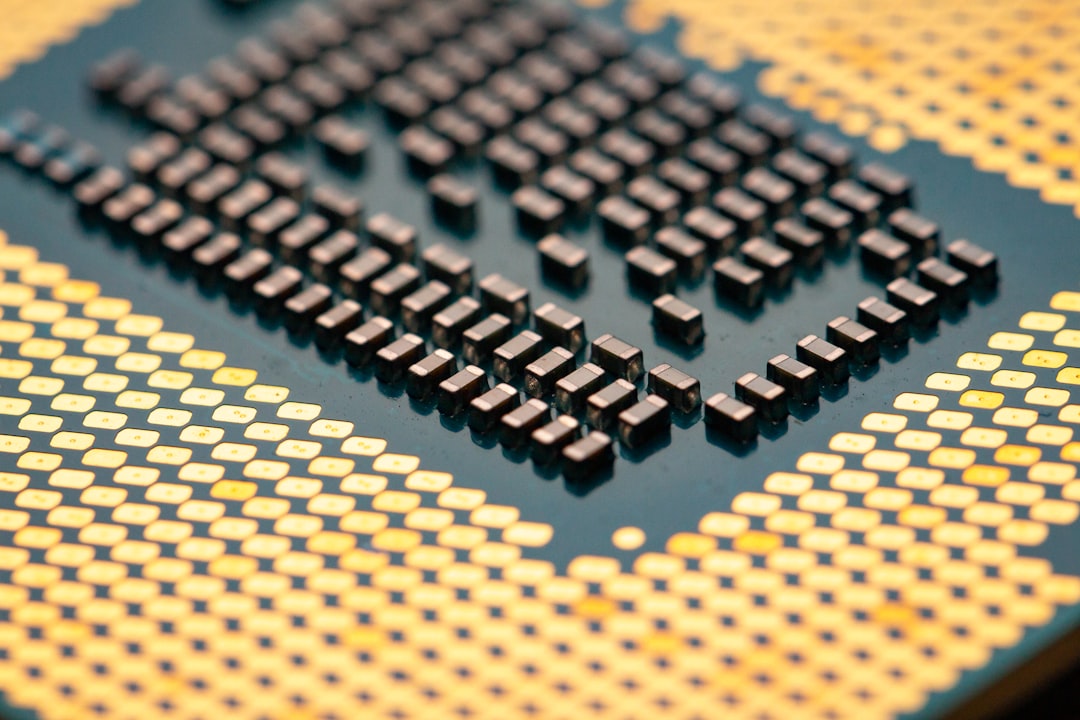

When people think about artificial intelligence, the first image that often comes to mind is a bank of powerful GPUs speeding up neural networks. But the AI boom isn’t just about raw compute. Real-world AI workloads require a complete data-center ecosystem: fast networks that shuttle data, energy-efficient power systems, smart storage, and software that orchestrates everything. In other words, the AI race hinges on the entire infrastructure stack, not just the chips at the heart of the data center.

What NVIDIA's Networking Revenue Surge Signals for Investors

In the latest quarter, the market spotlight shifted from GPUs to networking performance. NVIDIA's networking revenue rose sharply, underscoring that AI data centers are driving demand across the entire supply chain. Networks (switches, NICs, routers, and the associated software) are a growing share of hyperscale operators’ budgets as they scale training and inference workloads. In practical terms, this means rising orders for Ethernet switches and data-center fabric solutions, along with the power management systems and cooling hardware that keep these facilities running efficiently. The takeaway for investors is clear: broad AI exposure now requires looking beyond chips to the full data-center ecosystem.

Why AI Infrastructure Goes Beyond the GPU

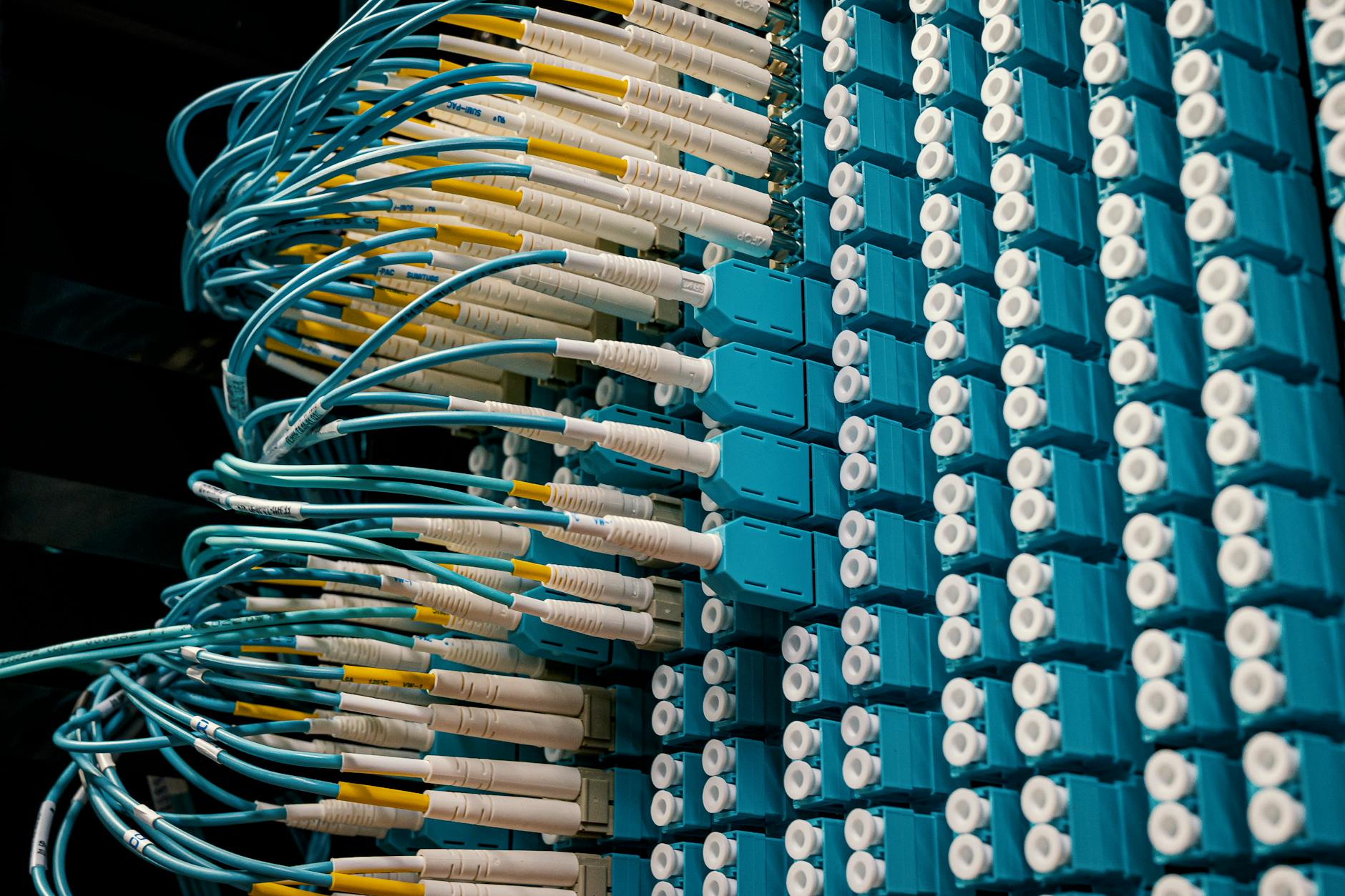

The two halves of the AI data center: compute and connectivity

AI workloads demand both high-performance computation and high-speed, reliable data transport. GPUs accelerate learning, but networks ferry the data, models, and results across racks and data centers, at petabit-per-second scale in some environments. Without robust networking, even the best GPUs sit idle. This is why companies that manufacture networking gear such as switches, NICs, and interconnect fabrics have become an increasingly important part of the AI backbone.

Scale, efficiency, and cost: the three levers for AI infrastructure

- Scale: Hyperscale operators need fabrics that can handle rising AI model sizes and larger training datasets.

- Efficiency: Power-efficient networking reduces operating expenses, a big factor in multi-megawatt AI data centers.

- Cost: As compute grows more expensive, networking hardware must deliver enough capacity that costly GPUs are not left waiting on bottlenecks or downtime.

Who Benefits Beyond NVIDIA: The Broader AI Supply Chain

The surge in AI data-center activity benefits a broad set of players across the supply chain. Here are the key categories investors might study:

- Networking hardware and software: Companies that design data-center switches, NICs, and fabric software see growing demand as networks scale to support larger models and faster inference. Name-brand players with hyperscale customers can capture durable revenue streams.

- Fabric and interconnect providers: Vendors offering high-speed interconnects and scalable fabrics help data centers move data more efficiently, reducing latency and energy use.

- Power, cooling, and energy management: Efficient power delivery and thermal systems lower total cost of ownership for AI facilities, making these suppliers attractive even when chip cycles slow.

- Software and orchestration: AI platforms require orchestration tools, security, and governance layers that sit atop hardware, creating recurring software revenue streams.

Investment Playbook: How to Capture AI Infrastructure Growth

If you’re looking to broaden exposure beyond NVIDIA stock, consider a layered approach that includes networking hardware, interconnects, and data-center software. Here are practical strategies to guide your decisions.

Strategy A: Diversify Across the AI Supply Chain

- Networking specialists: Look for firms with a strong lineup of data-center switches, NICs, and fabrics that serve hyperscale clients. These companies often report steady backlog and multi-year expansion orders, which can translate into more predictable revenue growth than exposure to chipmakers alone provides.

- Interconnect and fabric providers: Companies that enable high-speed data movement at scale can benefit from AI training demand and enterprise AI deployments alike.

- Power and cooling leaders: Hyperscale AI requires efficient energy use. Suppliers with innovative cooling and power management solutions can see durable demand, even when CPU/GPU cycles fluctuate.

Strategy B: Focus on High-Quality, Large-Cap Tech Names

Large, established tech names that serve data-center customers often provide more predictable cash flow and more frequent updates on AI-related orders. For example, a company that sells network fabrics to hyperscalers might publish quarterly data showing rising average selling prices and backlog tied to multi-year infrastructure upgrades. This kind of visibility can be attractive for long-term investors seeking steadier bets in a volatile AI market.

Strategy C: Watch for Revenue Mix Shifts, Not Just Headline Growth

Big jumps in AI-related revenue can come from one-off deals or a few large orders. The healthiest investments are those with a diversified revenue mix, including recurring software subscriptions for orchestration, security, and performance analytics. When a firm reports rising hardware sales alongside growing software attach rates, it’s a sign of durable demand across the data-center stack.
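To make the revenue-mix idea concrete, here is a minimal Python sketch that computes the software share of revenue, a software-to-hardware attach ratio, and how much of a quarter's bookings come from a few large orders. The `Quarter` type and all function names are hypothetical, and measuring attach rate as software dollars per hardware dollar is an assumption (companies define attach rate in different ways). Any figures you feed it should come from actual filings; the numbers in the note below are made up for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Quarter:
    """One quarter of figures, in millions of dollars (hypothetical structure)."""
    hardware_revenue: float
    software_revenue: float
    # Individual order values booked this quarter, if the company discloses them.
    order_sizes: list[float] = field(default_factory=list)


def software_share(q: Quarter) -> float:
    """Fraction of total revenue that comes from software."""
    return q.software_revenue / (q.hardware_revenue + q.software_revenue)


def attach_ratio(q: Quarter) -> float:
    """Software dollars per hardware dollar (one of several attach-rate conventions)."""
    return q.software_revenue / q.hardware_revenue


def top_order_concentration(q: Quarter, n: int = 3) -> float:
    """Share of booked order value that comes from the n largest orders."""
    largest = sorted(q.order_sizes, reverse=True)[:n]
    return sum(largest) / sum(q.order_sizes)


def durable_mix_signal(prev: Quarter, curr: Quarter) -> bool:
    """True when hardware revenue grew AND software attach improved quarter over quarter."""
    return (curr.hardware_revenue > prev.hardware_revenue
            and attach_ratio(curr) > attach_ratio(prev))
```

With hypothetical figures of 800 in hardware and 200 in software revenue, `software_share` gives 0.2 and `attach_ratio` gives 0.25. A high `top_order_concentration` is the numeric version of the one-off-deal warning above: growth that rests on a handful of orders.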

Real-World Examples: What to Watch Right Now

While no single stock is a guaranteed winner, several segments are showing resilience as AI deployments accelerate. Here are practical cues you can use when you evaluate potential investments:

- Data-center networking firms: Look for rising customer counts at hyperscale operators, improving supply-chain visibility, and expanding product lines that cover both hardware and software layers.

- Fabric and Ethernet switch leaders: Companies offering scalable, low-latency fabrics tend to benefit from the need to connect more GPUs and accelerators across larger clusters.

- Energy-efficiency innovators: Firms that reduce cooling and power usage per teraflop can improve margins as AI workloads surge, even if their stock price swings with GPU cycles.

Risks to Consider in AI Infrastructure Investing

Every growth story has potential headwinds. Here are a few you should weigh carefully:

- Cycle risk: AI hardware demand often follows model releases, training timelines, and server refresh cycles. A sudden shift in AI pricing or a slowdown in model innovation can impact orders.

- Supply chain fragility: Semiconductor shortages, component delays, and logistics hiccups can throttle revenue growth in a way that isn’t directly tied to AI demand.

- Competition and pricing pressure: A crowded field of chipmakers and networking vendors can compress gross margins if new entrants push prices down or if alternative architectures gain traction.

- Regulatory and security concerns: Data-center infrastructure touches critical systems, so regulatory changes or cybersecurity incidents can affect investor sentiment and spending.

Conclusion: The AI Infrastructure Renaissance

The AI opportunity is expanding from a single-chip narrative to a full-blown infrastructure revolution. The data center now requires a balanced portfolio of compute and connectivity, with energy efficiency and software orchestration playing increasingly important roles. The jump in NVIDIA's networking revenue signals this broader trend: AI's backbone is being built across many layers of the data-center stack, not just on GPUs. Investors who understand the supply-chain dynamics (who is selling the fabrics, switches, power systems, and software that enable AI at scale) can position themselves to benefit from sustained growth even as chip cycles fluctuate. By diversifying across the AI ecosystem and focusing on durable demand drivers, you can navigate a future where AI is as much about networking as it is about neural nets.

FAQ

What does the surge in networking revenue mean for NVIDIA specifically?

It highlights that AI deployments require end-to-end infrastructure. While GPUs drive model training and inference, the accompanying networking hardware and software are essential to move data quickly and reliably. For NVIDIA, this shift has broader implications for the company’s revenue mix and the ecosystem around its GPUs.

How can an investor gain exposure beyond GPUs to benefit from AI infrastructure growth?

Consider a diversified approach: (1) networking and interconnect leaders that supply data-center fabrics, NICs, and switches; (2) software and orchestration providers that help manage AI workloads; (3) power and cooling specialists that improve data-center efficiency. Pair these with some exposure to major AI chipmakers to balance the portfolio.

What are the key red flags to monitor in this space?

Healthy backlogs and long-term contracts indicate durable demand, but overreliance on a few large orders is a red flag. Also monitor gross margin stability, R&D intensity, supply-chain disruptions, and regulatory developments that could affect data-center capex cycles.

Which metrics matter most when evaluating AI infrastructure stocks?

Backlog growth, billings/receipts, gross margin trajectory, and software attach rates are critical. Revenue mix by product line (hardware vs. software) and the pace of multi-year contracts can reveal whether a company is building a sustainable AI platform rather than chasing quick hardware spikes.
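Backlog growth and gross margin trajectory reduce to simple arithmetic over quarterly figures. Here is a minimal Python sketch, assuming you have already collected backlog, revenue, and cost-of-goods-sold numbers per quarter. The function names and the idea of a fixed stability tolerance are hypothetical choices for illustration, not a standard screening method.

```python
def growth_rates(values: list[float]) -> list[float]:
    """Period-over-period growth, e.g. quarterly backlog growth (0.1 means +10%)."""
    return [(curr - prev) / prev for prev, curr in zip(values, values[1:])]


def gross_margins(revenue: list[float], cogs: list[float]) -> list[float]:
    """Gross margin per period: (revenue - cost of goods sold) / revenue."""
    return [(r - c) / r for r, c in zip(revenue, cogs)]


def is_stable(series: list[float], tolerance: float) -> bool:
    """True if no two adjacent periods differ by more than `tolerance`."""
    return all(abs(b - a) <= tolerance for a, b in zip(series, series[1:]))
```

For instance, a hypothetical backlog series of 1,000, 1,100, and 1,210 grows 10% each quarter, and margins of 60%, roughly 59%, and 60% would pass `is_stable(margins, 0.02)`. A sudden margin drop alongside rising backlog could suggest the company is buying growth with price cuts.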

When should an investor expect networking revenue growth to normalize?

Industry cycles suggest peer companies typically experience several quarters of elevated orders as hyperscalers expand capacity. Normalization may occur as customers complete large, multi-year data-center overhauls, followed by a gradual return to steadier growth tied to ongoing AI workloads and software revenue.
