A Quiet AI Breakout You Might Be Missing

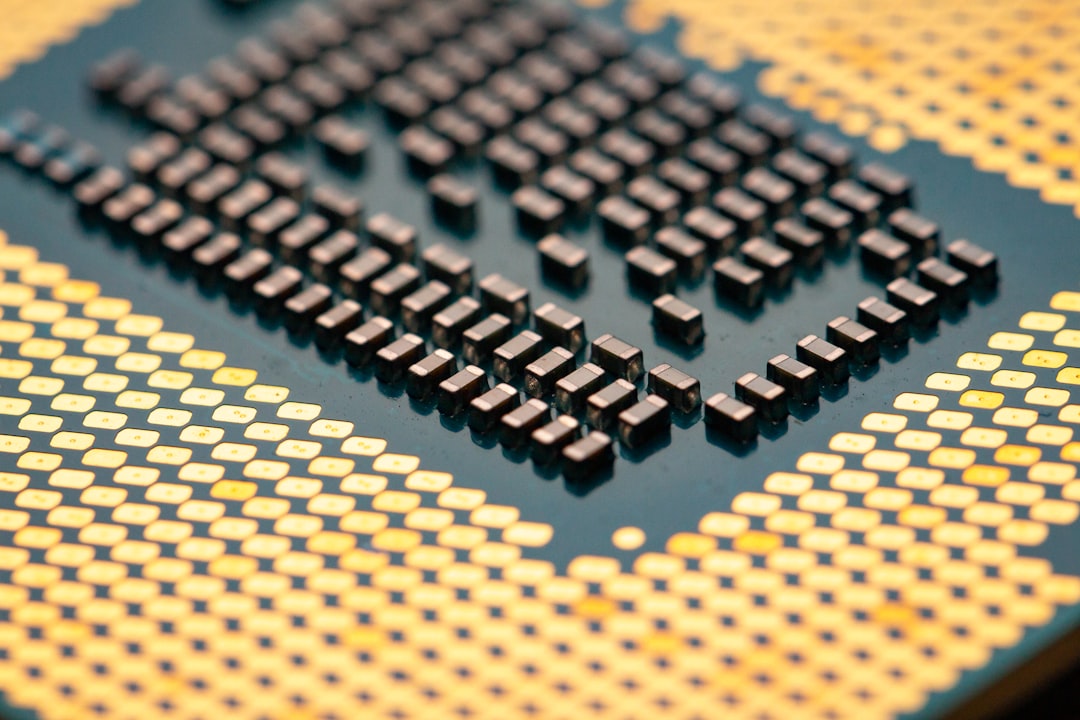

If you’ve been scanning the market for the next big AI winner, don’t overlook the quiet force behind most AI breakthroughs: memory and storage. Chips that run AI models grab the headlines, but the real bottleneck often sits in the memory ecosystem, where faster memory, higher bandwidth, and smarter storage strategies are needed to feed growing AI workloads. This dynamic has turned what some call a “hidden stock year” into a real-money narrative: a subset of technology stocks that can post meaningful gains even when broader markets wobble.

In 2026, many investors are waking up to the fact that AI infrastructure isn’t just about raw processing power. It’s about how quickly data can be retrieved, moved, and stored. When memory bandwidth improves and storage becomes cheaper per terabyte, AI models can run with larger context windows, bigger batch sizes, and lower latency. That kind of improvement can translate into stronger revenue per chip, wider margins for memory suppliers, and, yes, stock-price re-ratings from Wall Street analysts.

Why Memory Is the Hidden Engine Behind AI Growth

AI workloads demand massive memory bandwidth. Modern transformers and large language models rely on rapid access to weights, activations, and intermediate results. The bottleneck is usually not just “how fast the CPU or GPU runs,” but how fast memory can keep up with it. Three trends are reshaping the memory-and-storage landscape right now:

- Bandwidth ramps are accelerating. High-bandwidth memory (HBM) and advanced DDR/LPDDR standards are pushing data through at rates that used to sound like sci-fi. For AI workloads, even a 20–30% improvement in memory bandwidth can meaningfully reduce training time and inference latency.

- Storage costs and speed are compressing. NVMe SSDs and fast caching layers are getting cheaper per terabyte, while new storage-class memory options promise near-DRAM latency with persistent storage. This blurs the line between memory and storage, expanding opportunities for AI data pipelines.

- Capex discipline and supply cycles matter. The memory market is capital-intensive. Companies that secure reliable supply agreements and manage capacity opportunistically can ride through cycles with stronger gross margins and steadier free cash flow.
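To make the bandwidth point concrete, here is a back-of-the-envelope sketch. It assumes a purely memory-bound workload, such as single-stream LLM decoding where each generated token requires reading all model weights from memory once, so time per token is roughly weight bytes divided by memory bandwidth. The model size (a hypothetical 70B parameters at 2 bytes each) and the bandwidth figures are illustrative, and the sketch ignores compute limits, KV-cache traffic, and batching.

```python
# Roofline-style lower bound, assuming a fully memory-bound workload:
# every token streams all weights from memory once, so time ~ bytes / bandwidth.
# Model size and bandwidth values below are hypothetical.

def decode_time_per_token_ms(params_billions: float,
                             bytes_per_param: float,
                             bandwidth_tb_s: float) -> float:
    """Lower-bound time (ms) to read all weights once at the given bandwidth."""
    weight_bytes = params_billions * 1e9 * bytes_per_param
    bandwidth_bytes_per_s = bandwidth_tb_s * 1e12
    return weight_bytes / bandwidth_bytes_per_s * 1e3


def latency_reduction(bandwidth_gain: float) -> float:
    """Fractional latency cut when bandwidth rises by `bandwidth_gain` (0.25 = +25%)."""
    return 1 - 1 / (1 + bandwidth_gain)


if __name__ == "__main__":
    base = decode_time_per_token_ms(70, 2, 3.0)     # hypothetical 3.0 TB/s
    faster = decode_time_per_token_ms(70, 2, 3.75)  # +25% bandwidth
    print(f"baseline: {base:.1f} ms/token, +25% bandwidth: {faster:.1f} ms/token")
    for gain in (0.20, 0.25, 0.30):
        print(f"+{gain:.0%} bandwidth -> {latency_reduction(gain):.1%} less time per token")
```

Under this assumption, per-token time scales with 1/bandwidth, so a 20–30% bandwidth gain trims it by roughly 17–23%. Workloads that are partly compute-bound would see a smaller effect.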

For investors, these trends create a powerful thesis: choose companies with durable memory technology leadership, scalable manufacturing, and disciplined capital deployment. That combination can unlock a steady stream of cash flow as AI adoption expands—not just in data centers, but at the edge and in enterprise software where AI accelerates decision-making.

The Hidden Stock Year: A Case Study of a Quiet AI Memory Leader

Picture a company we’ll call NovaMem Technologies (fictional ticker NMEM for this discussion). NovaMem focuses on high-bandwidth memory modules and advanced storage solutions designed for AI inference and training. It isn’t the flashiest name on the street, but it has steadily gained traction by partnering with data-center builders, hyperscalers, and system integrators who demand reliability and scale. In the hidden stock year narrative, NovaMem offers a clean example of how memory leadership can translate into outsized stock performance and analyst optimism.

Key attributes behind NovaMem’s appeal include:

- Product leadership. NovaMem’s latest generation of HBM-style modules and DDR-based high-speed memory targets AI workloads with higher bandwidth per watt, enabling more efficient servers and accelerators.

- Growing end-market exposure. The company serves cloud providers, autonomous systems developers, and enterprise AI deployments, giving it a broad base beyond consumer devices.

- Financial metrics on the rise. Revenue growth has hovered in the mid-teens to low-20s percentage range year over year, with improving gross margins as the mix shifts toward higher-value memory solutions. Free cash flow is turning positive after several years of heavy reinvestment.

- Analyst sentiment improving. In early 2026, Wall Street began signaling higher expectations for NovaMem as AI demand thickens. A wave of price-target revisions and boosted earnings estimates followed, with some firms suggesting a path toward a $500+ target for the stock under favorable conditions.
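The financial metrics in the list above reduce to simple arithmetic. This sketch computes year-over-year revenue growth, gross margin, and free cash flow (operating cash flow minus capital expenditures) for two years of hypothetical figures; the numbers are invented for illustration and are not NovaMem data, since NovaMem itself is fictional.

```python
from dataclasses import dataclass


@dataclass
class YearFinancials:
    # All figures in millions of dollars; the values used below are hypothetical.
    revenue: float
    cost_of_goods_sold: float
    operating_cash_flow: float
    capex: float

    @property
    def gross_margin(self) -> float:
        """(Revenue - COGS) / revenue."""
        return (self.revenue - self.cost_of_goods_sold) / self.revenue

    @property
    def free_cash_flow(self) -> float:
        """Operating cash flow minus capital expenditures."""
        return self.operating_cash_flow - self.capex


def yoy_growth(prior: float, current: float) -> float:
    """Year-over-year growth as a fraction (0.2 = 20%)."""
    return current / prior - 1


if __name__ == "__main__":
    prior = YearFinancials(revenue=1_000, cost_of_goods_sold=650,
                           operating_cash_flow=180, capex=220)
    current = YearFinancials(revenue=1_200, cost_of_goods_sold=720,
                             operating_cash_flow=300, capex=250)
    print(f"revenue growth: {yoy_growth(prior.revenue, current.revenue):.0%}")
    print(f"gross margin: {prior.gross_margin:.0%} -> {current.gross_margin:.0%}")
    print(f"free cash flow: {prior.free_cash_flow:+.0f}M -> {current.free_cash_flow:+.0f}M")
```

With these invented inputs, revenue grows 20%, gross margin expands from 35% to 40% as the mix improves, and free cash flow swings from -$40M to +$50M, the same pattern the NovaMem bullets describe.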

In practical terms, the market often rewards a memory specialist’s ability to scale with AI. NovaMem’s strategic partnerships, disciplined capex, and demonstrated margins provide a narrative that can resonate with investors who want exposure to AI growth without piling into the more crowded hyperscaler equities. This is a classic example of hidden stock year dynamics: a technical niche becoming a mainstream, marketable theme as AI demand compounds year after year.

How to Evaluate Opportunities Like NovaMem in Your Portfolio

Investors who want exposure to AI memory themes should build a framework that blends fundamentals with practical risk checks. Here are steps you can use to assess hidden stock year opportunities like this one without overpaying.

- Assess the addressable market. Estimate the total addressable memory market for AI workloads over the next 3–5 years. Consider memory types (HBM, DDR, NAND), end-markets (cloud, edge, automotive, healthcare), and the likely mix shift toward higher-value modules.

- Analyze gross margins and capex intensity. Companies with expanding gross margins and sustainable capital expenditure plans tend to weather AI cycles better. Watch the margin trajectory as product mix shifts toward premium memory solutions.

- Review balance sheet and cash flow quality. A healthy balance sheet with low debt and positive free cash flow is a big plus when AI cycles are volatile. If a company is still reinvesting heavily, estimate how long it might take to reach sustained positive free cash flow.

- Check customer concentration and supply risk. Dependence on a handful of customers or on a single memory foundry can heighten risk. Diversified customer bases and multi-sourcing reduce supply-chain risk.

- Evaluate the growth catalysts. New memory architectures, faster interconnects, or software that unlocks AI efficiency can serve as catalysts for revenue growth and margin expansion.

- Consider valuation context. Compare EV/EBITDA and price-to-earnings multiples against peers with similar tech risk and AI exposure. If a stock has recently jumped on sentiment, test if the premium is justified by fundamentals and growth trajectory.

- Plan an exit strategy. Set price targets with a documented path to risk-adjusted returns. If a stock spikes on hype, determine a tactical plan to trim exposure or rebalance into higher-conviction ideas.

- Use scenario analysis. Run base-case, bull-case, and bear-case scenarios to gauge how sensitive earnings are to memory-cycle swings, capex costs, and AI adoption speed.
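Scenario analysis and price targets can be combined in a few lines. This sketch assigns each scenario a subjective probability, an assumed earnings-per-share figure, and an assumed P/E multiple, then computes the implied price per scenario and a probability-weighted value. All inputs are hypothetical, and EPS × P/E is only one of several ways analysts derive targets.

```python
from dataclasses import dataclass


@dataclass
class Scenario:
    name: str
    probability: float  # subjective weight; all weights should sum to 1
    eps: float          # assumed earnings per share in the target year
    pe_multiple: float  # assumed price-to-earnings multiple

    @property
    def implied_price(self) -> float:
        return self.eps * self.pe_multiple


def probability_weighted_price(scenarios: list[Scenario]) -> float:
    """Expected implied price across scenarios; rejects weights that don't sum to 1."""
    total = sum(s.probability for s in scenarios)
    if abs(total - 1.0) > 1e-9:
        raise ValueError(f"scenario probabilities sum to {total}, expected 1.0")
    return sum(s.probability * s.implied_price for s in scenarios)


if __name__ == "__main__":
    # Hypothetical inputs for illustration only.
    scenarios = [
        Scenario("bear", 0.25, eps=8.0, pe_multiple=15),
        Scenario("base", 0.50, eps=14.0, pe_multiple=22),
        Scenario("bull", 0.25, eps=20.0, pe_multiple=28),
    ]
    for s in scenarios:
        print(f"{s.name:>4}: ${s.implied_price:,.0f}")
    print(f"probability-weighted: ${probability_weighted_price(scenarios):,.0f}")
```

Here the bear, base, and bull cases imply $120, $308, and $560, and the probability-weighted value is $324. Comparing that figure with the current share price gives a rough sense of whether the upside justifies the risk, and changing one probability or multiple at a time shows which assumption the result is most sensitive to.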

Applying this framework to the hidden stock year idea helps ensure you don’t chase name-brand AI plays at unjustified valuations. The emphasis is on the memory-and-storage backbone of AI infrastructure, which tends to be steadier than the hype around AI software while still offering meaningful upside when the cycle turns favorable.
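For the valuation-context step in the framework, one common check is EV/EBITDA relative to peers. Enterprise value here uses the simple textbook form (market capitalization plus total debt minus cash); the company and peer figures are hypothetical, and real comparisons should use consistent fiscal periods and EBITDA definitions.

```python
from statistics import median


def enterprise_value(market_cap: float, total_debt: float, cash: float) -> float:
    """Simplified EV: equity value plus debt, minus cash (same currency units)."""
    return market_cap + total_debt - cash


def ev_to_ebitda(market_cap: float, total_debt: float, cash: float, ebitda: float) -> float:
    if ebitda <= 0:
        raise ValueError("EV/EBITDA is not meaningful for zero or negative EBITDA")
    return enterprise_value(market_cap, total_debt, cash) / ebitda


def premium_to_peers(multiple: float, peer_multiples: list[float]) -> float:
    """Fractional premium (positive) or discount (negative) versus the peer median."""
    return multiple / median(peer_multiples) - 1


if __name__ == "__main__":
    # Hypothetical figures in millions of dollars.
    company = ev_to_ebitda(market_cap=30_000, total_debt=2_000, cash=4_000, ebitda=2_000)
    peers = [10.0, 11.0, 12.0, 13.0]  # hypothetical peer EV/EBITDA multiples
    print(f"company EV/EBITDA: {company:.1f}x, peer median: {median(peers):.1f}x")
    print(f"premium to peers: {premium_to_peers(company, peers):.0%}")
```

In this example the company trades at 14.0x EBITDA against a peer median of 11.5x, a premium of about 22%. Whether that premium is justified depends on the growth and margin trajectory, not on the multiple alone.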

What Wall Street Is Seeing: Price Targets and Growth Scenarios

Analysts have started to assign greater importance to memory-centric AI plays. In the NovaMem example, a wave of price-target revisions followed solid quarterly results and improving guidance. A raised target to around the $500 level reflects a multi-year view where AI adoption expands beyond hyperscalers to smaller data-center deployments and edge devices. While every stock carries risk, the catalyst here is clear: as AI models scale, memory systems must scale faster, and that demand translates into tangible revenue and margin opportunities for the leading memory manufacturers and their ecosystem partners.

Real-World Considerations: Risks You Should Not Ignore

Even in a strong AI memory thesis, risks abound. Here are the main headwinds to monitor if you’re considering a position in this space:

- Cycle risk. The memory market is cyclical. Demand can swing with memory-price dynamics, supplier capacity, and AI deployment cycles. Investors should be prepared for quarterly volatility, not a straight line up.

- Competition and pricing pressure. A few key players can drive price competition, compress margins, or push customers toward more integrated solutions. Differentiation through performance and reliability matters.

- Geopolitical and supply-chain risk. Global semiconductor supply chains are sensitive to policy shifts, trade restrictions, and regional capacity constraints. Diversified manufacturing and robust supplier relationships help mitigate surprises.

- Technology risk. Memory technology improves rapidly, but slower-than-expected AI adoption or a shift to alternative memory architectures could alter the growth trajectory.
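Cycle risk shows up most clearly in gross margin. When average selling prices (ASPs) fall while per-unit manufacturing cost stays roughly fixed in the short run, margin compresses faster than price. This sketch assumes a hypothetical $100 ASP and $60 unit cost and holds cost constant, which real fabs only approximate.

```python
def gross_margin(asp: float, unit_cost: float) -> float:
    """Gross margin per unit: (price - cost) / price."""
    return 1 - unit_cost / asp


def margin_after_price_change(asp: float, unit_cost: float, price_change: float) -> float:
    """Gross margin after ASP moves by `price_change` (e.g. -0.2 = -20%), unit cost held fixed."""
    return gross_margin(asp * (1 + price_change), unit_cost)


if __name__ == "__main__":
    asp, unit_cost = 100.0, 60.0  # hypothetical ASP and unit cost
    for change in (-0.30, -0.20, -0.10, 0.0, 0.10, 0.20):
        margin = margin_after_price_change(asp, unit_cost, change)
        print(f"ASP {change:+.0%}: gross margin {margin:.1%}")
```

A 20% price decline takes gross margin from 40% to 25%, while a 20% price increase lifts it only to 50%. That asymmetry is one reason memory stocks can swing sharply on pricing news.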

For investors, the takeaway is simple: treat this as a strategic allocation to AI infrastructure layers, not a speculative bet on a single hype-driven stock. That distinction matters when you size the position and when you adjust it in response to new data releases, capex updates, or competitive shifts.

Thematic and Macro Considerations: What Could Move This Story Forward

The AI revolution is not a single event; it’s a multi-year shift in how businesses operate, how data is stored and moved, and how decisions are automated. Several macro themes support the memory-and-storage thesis in the hidden stock year narrative:

- AI training and inference scale. As models grow from hundreds of billions to trillions of parameters, the demand for high-speed memory and persistent storage increases, supporting revenue growth for memory ecosystems.

- Edge AI and latency requirements. Edge deployments require memory solutions that deliver fast access with low power. Companies that can provide compact, high-performance memory for edge devices may see expanding demand beyond data centers.

- Capital discipline in semiconductors. In a capital-intensive industry, firms that optimize capex without sacrificing innovation tend to outpace peers over the long run.

- Inflation and input costs. Memory components are commodity-like in some cycles. Effective procurement and supplier diversification help stabilize margins during inflationary periods.
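The parameter-count point can be quantified with a rough footprint calculation. Storing weights alone takes about parameters × bytes per parameter; this sketch assumes 16-bit weights (2 bytes) and a hypothetical 80 GB of high-bandwidth memory per accelerator, and it ignores activations, KV cache, and optimizer state, which add substantially more during training.

```python
import math


def weight_footprint_gb(params_billions: float, bytes_per_param: float) -> float:
    """Weights-only memory in decimal GB: (params_billions * 1e9 * bytes) / 1e9."""
    return params_billions * bytes_per_param


def accelerators_to_hold_weights(params_billions: float, bytes_per_param: float,
                                 hbm_gb_per_device: float) -> int:
    """Minimum device count whose combined memory can hold the weights alone."""
    return math.ceil(weight_footprint_gb(params_billions, bytes_per_param) / hbm_gb_per_device)


if __name__ == "__main__":
    for params in (200, 500, 1000):
        gb = weight_footprint_gb(params, 2)               # 16-bit weights
        n = accelerators_to_hold_weights(params, 2, 80)   # hypothetical 80 GB per device
        print(f"{params:>5}B params: {gb:,.0f} GB of weights, >= {n} devices")
```

At these assumptions, a 200B-parameter model needs about 400 GB for weights (at least 5 devices), while a 1T-parameter model needs about 2,000 GB (at least 25 devices), so memory capacity demand grows in step with model size.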

Investors who think in this broader frame understand why the hidden stock year thesis can survive short-term pullbacks. The value isn’t just in a single quarterly beat; it’s about the durability of AI-driven demand for memory and storage, and the ability of trusted suppliers to translate that demand into earnings power over several years.

Conclusion: Why This Hidden Stock Year Matters to Long-Term Investors

The AI wave is not a one-quarter story. It’s an ongoing, multi-year cycle that depends on memory bandwidth, storage density, and the efficiency of data pipelines. The hidden stock year concept helps investors look beyond flashy AI platforms and focus on the backbone that makes AI possible. By identifying memory-and-storage leaders with durable margins, sensible capital practices, and secure supply chains, you can participate in the upside while managing risk. The case study of NovaMem illustrates a practical blueprint: a company with product leadership, expanding end-market exposure, improving free cash flow, and supportive analyst coverage can become a meaningful piece of a diversified AI portfolio.

Remember, a thoughtful approach to this space combines quantitative diligence with qualitative judgment. Monitor earnings power, keep an eye on AI adoption trends, and stay mindful of the cycle dynamics that characterize this industry. If you do, the hidden stock year theme can become a core part of a robust, long-term strategy rather than a fleeting rumor in a crowded market.

Frequently Asked Questions

Q1: What makes a stock qualify for the hidden stock year theme?

A1: Stocks in this theme typically sit in the memory and storage layer of AI infrastructure. They tend to benefit from rising AI workloads, have durable margins as they shift toward higher-value memory products, and show improving or stable cash flow. They’re not the most famous AI names, but they offer a reliable link to AI growth through hardware and systems capability.

Q2: How should I size exposure to AI memory plays in a portfolio?

A2: Start small—about 1–2% of your equity sleeve for a single idea. If the thesis proves solid over two to four quarters, you can scale to 3–5% with tighter risk controls. Use stop-loss levels aligned with your overall risk tolerance and portfolio diversification strategy.
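The sizing guidance in A2 can be checked with simple arithmetic: the share of the equity sleeve at risk is the position weight multiplied by the distance to the stop-loss. The sleeve value and stop-loss percentages in this sketch are hypothetical; choose levels that match your own risk tolerance.

```python
def position_value(sleeve_value: float, weight: float) -> float:
    """Dollar size of a position given its weight in the equity sleeve."""
    return sleeve_value * weight


def sleeve_risk(weight: float, stop_loss_pct: float) -> float:
    """Fraction of the sleeve lost if the position falls to its stop-loss level."""
    return weight * stop_loss_pct


if __name__ == "__main__":
    sleeve = 200_000.0  # hypothetical equity sleeve, in dollars
    # (weight, stop-loss distance) pairs: a starter position, then a scaled-up one
    for weight, stop in ((0.015, 0.20), (0.04, 0.15)):
        size = position_value(sleeve, weight)
        risk = sleeve_risk(weight, stop)
        print(f"weight {weight:.1%}: ${size:,.0f} position, "
              f"{risk:.2%} of sleeve at risk (${size * stop:,.0f})")
```

A 1.5% starting position with a 20% stop risks about 0.3% of the sleeve; scaling to 4% with a 15% stop risks about 0.6%. A stop-loss order can fill below its trigger price in a fast-moving market, so the realized loss may be larger.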

Q3: What are the biggest risks to this space right now?

A3: The main risks are cyclical demand swings, price competition among memory suppliers, supply-chain disruptions, and shifts in AI deployment pace. Maintaining a balanced view and avoiding overconcentration helps protect against unexpected shifts in the market.

Q4: Should I favor large-cap or small-mid cap names in this theme?

A4: A blended approach often works best. Large-cap players may offer stability and broad exposure to memory ecosystems, while small-to-mid-cap names can provide higher-growth upside if they have unique IP, strategic partnerships, or robust pipeline contracts. Balance is key.
