Trump’s Bold Claim Sparks Investor Debate

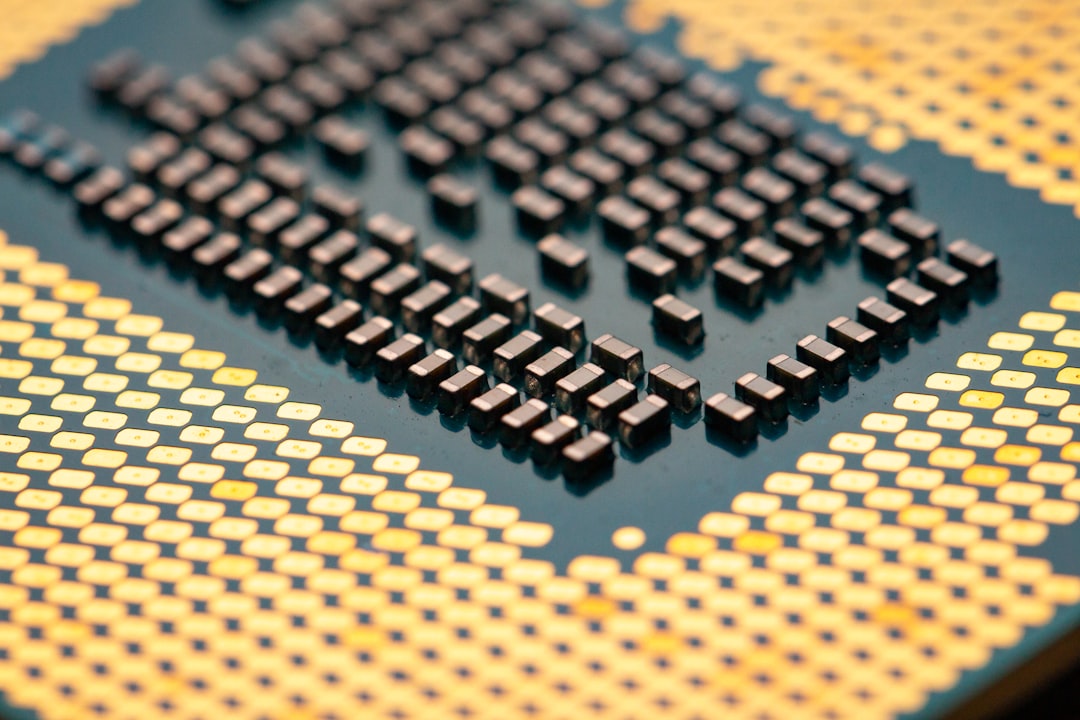

In a high-stakes moment for technology and energy markets, President Donald Trump asserted that AI data centers should shoulder their own power costs. The comment, delivered during a nationally televised address, quickly became a talking point for investors watching how AI infrastructure will be financed in a world hungry for faster processing and cheaper, cleaner energy. The administration and industry observers stressed that the claim was meant as a policy signal, but its practicality remains under close scrutiny.

Market participants wasted little time parsing the potential implications for cloud providers, chipmakers, and energy suppliers. If data centers must cover their own electricity, some expect a shift in capex planning, contracting, and even location strategy. Traders and fund managers asked whether the plan could accelerate investments in on-site generation, microgrids, and long-term power purchase agreements, or if it would simply push costs onto service levels and pricing.

As of Feb. 25, 2026, investors are balancing optimism about AI growth against concerns about electricity prices, grid reliability, and the regulatory backdrop. The remark has already circulated across social feeds as shorthand for a broader debate about who pays for the energy that fuels AI’s next wave of productivity gains.

What the President Said and Why It Matters

The core message, delivered in a moment that underscored the national focus on AI, was straightforward: large tech firms building AI data centers should fund their own power consumption rather than rely on subsidized or grid-backed energy. In remarks that touched on resilience and national competitiveness, the president framed power costs as a competitive lever that could influence where new data-center capacity is located and how quickly it scales up.

Supporters say the approach could spur innovation in energy sourcing, including on-site generation, battery storage, and advanced cooling. Critics counter that the economics of AI workloads—where demand can spike or stall with model updates and regulatory changes—do not align neatly with a self-funded energy model. The practical concerns span capital spend, debt service, and the risk of stranded assets if energy markets shift faster than computing needs.

Analysts emphasize that even if data centers guarantee their own power, grid operators and policymakers still control the broader energy mix. The plan does not eliminate energy costs; it changes who bears them and how pricing is structured across service tiers, redundancy requirements, and uptime guarantees.

Energy Economics 101: Why This Isn’t a Simple Equation

Experts point to several reasons why the idea of data centers paying for all electricity is more complicated in practice than it sounds in a campaign-stage pitch.

- On-site and microgrid costs: Building solar arrays, diesel or natural gas backup generation, and battery storage can reduce exposure to wholesale price spikes, but upfront capital costs and maintenance add a new layer of expense that must be amortized over years.

- Cooling needs and efficiency: AI workloads generate intense heat; even with cooling innovations, power demand remains a dominant cost, particularly in regions with hot climates or aging grids.

- Grid reliability and resilience: Power outages and brownouts carry a heavy price for AI operators. Self-generation can improve resilience, but it also introduces complexity in balancing local systems with a larger electrical network.

- PPA and commodity markets: Long-term power purchase agreements could lock in prices, yet they also expose operators to commodity swings in fuel and carbon costs, depending on contract structure and policy shifts.

- Regulatory and policy risk: Tax incentives, credits, and new energy laws shape the economics of on-site generation and energy storage. A policy environment that changes faster than hardware cycles can alter expected returns.

In other words, the concept is not simply about avoiding grid bills; it’s about reshaping the entire cost stack of an AI data center, from siting and design to energy hedging and maintenance. The remark has become shorthand in some circles for a philosophy that energy costs should be allocated directly to the users of AI services, but the practical mechanics remain unsettled.
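To make the amortization point concrete, here is a minimal sketch of how upfront capital for on-site generation might be spread over a project's life and compared with a grid price. It uses the standard capital recovery factor; the function names and every input figure (capex, discount rate, lifetime, maintenance, output) are hypothetical assumptions for illustration, not numbers from any operator.

```python
def capital_recovery_factor(rate: float, years: int) -> float:
    """Share of upfront capital repaid each year under level annual payments."""
    if years <= 0:
        raise ValueError("years must be positive")
    if rate == 0:
        return 1.0 / years
    growth = (1.0 + rate) ** years
    return rate * growth / (growth - 1.0)


def onsite_cost_per_kwh(capex: float, rate: float, years: int,
                        annual_om: float, annual_kwh: float) -> float:
    """Amortized capital plus yearly maintenance, divided by yearly output."""
    annual_capital = capex * capital_recovery_factor(rate, years)
    return (annual_capital + annual_om) / annual_kwh


if __name__ == "__main__":
    # All inputs below are illustrative assumptions.
    cost = onsite_cost_per_kwh(
        capex=100e6,        # hypothetical $100M build
        rate=0.07,          # hypothetical cost of capital
        years=20,           # hypothetical asset life
        annual_om=2e6,      # hypothetical yearly maintenance
        annual_kwh=200e6,   # hypothetical yearly output
    )
    print(f"Amortized on-site cost: ${cost:.4f} per kWh")
```

Under these assumed inputs the amortized figure can be set directly against a quoted grid rate; changing the discount rate or asset life shifts the result noticeably, which is why policy and financing terms matter as much as fuel prices.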

What Investors Are Watching Next

Investors in data-center REITs, cloud providers, and energy tech companies are scanning multiple indicators to gauge whether the policy idea could translate into real costs being shaved—or shifted—over the next several quarters.

- Electricity price trends: Industrial electricity rates and wholesale fuel costs continue to move with regional demand and the pace of decarbonization. Analysts note that even if individual data centers self-generate, wholesale energy markets will still influence long-term economics through hedges and capex decisions.

- Capital expenditure cycles: If providers pursue on-site generation, the industry could see a wave of capital deployment in solar, storage, and microgrids. Investors will look at payback periods, debt capacity, and the impact on free cash flow.

- Location strategy: Regions offering favorable energy costs and supportive policy frameworks may attract new builds, while others may lag if grid reliability or permitting slows project timelines.

- Technology risk: Advances in AI efficiency could alter energy intensity per unit of computation. A drop in watts per AI operation would mitigate energy costs even as demand grows.

- Policy catalysts: Any legislative moves on incentives for on-site generation, carbon pricing, or grid upgrades could accelerate or reshape the economics of the plan.
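The payback periods investors look at can be sketched with a simple, undiscounted calculation. This is only an illustration under stated assumptions: the function name, the split between grid price and on-site running cost, and all example figures are hypothetical.

```python
def simple_payback_years(capex: float, annual_kwh: float,
                         grid_price: float, onsite_running_cost: float) -> float:
    """Years for avoided grid purchases to repay upfront capital (no discounting)."""
    annual_savings = annual_kwh * (grid_price - onsite_running_cost)
    if annual_savings <= 0:
        # On-site power is no cheaper to run than the grid, so it never pays back.
        return float("inf")
    return capex / annual_savings


if __name__ == "__main__":
    # Hypothetical inputs: $100M build, 200 GWh per year,
    # 8 cents per kWh from the grid versus 3 cents to run on-site.
    years = simple_payback_years(100e6, 200e6, 0.08, 0.03)
    print(f"Simple payback: {years:.1f} years")
```

A fuller analysis would discount future savings and account for debt service, but even this rough version shows how sensitive payback is to the spread between grid and on-site costs.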

Traders have said that the market is watching for more concrete details on how data centers would hedge energy risk and how policymakers intend to balance reliability, cost, and climate goals. The remark, echoed across trading desks, has become shorthand for a broader expectation that energy cost allocation will be a central theme in AI infrastructure strategy for years to come.

The Investor Playbook: How to Position Now

For investors, the evolving debate offers several angles. The AI boom remains a structural growth pillar, but the energy dimension adds a layer of complexity that could affect profitability and cash flow timing. Here are some practical considerations for portfolios:

- Diversify across developers, operators, and utilities: Exposure to data-center operators, energy storage manufacturers, and regional utilities can capture different aspects of the energy transition around AI demand.

- Assess balance sheets for energy-heavy assets: Companies financing on-site generation may carry higher debt but could offer hedging advantages if energy markets remain volatile.

- Monitor policy risk and grid investment: Regions that accelerate grid upgrades and permitting processes may become more attractive for new builds, influencing where capital flows go.

- Watch for earnings signals: If operators begin to pass energy costs into service pricing or tiered offerings, margin dynamics could shift in the short term.

As the market absorbs the commentary and waits for it to be translated into concrete policy detail, investors should expect a period of debate, pilot projects, and measured experimentation rather than an overnight transformation. The energy puzzle for AI infrastructure is intricate, and any meaningful changes will unfold over multiple quarters as data centers adapt to new financial realities.

Data at a Glance

- Estimated share of national electricity used by AI data centers: commonly cited figures range from 1% to 2% of total electricity demand, depending on region and density of facilities.

- Industrial electricity price range: about 6 to 9 cents per kWh nationally, with regional variations influenced by fuel mix and demand patterns.

- Capital expenditure outlook: multi-billion-dollar investments anticipated in on-site generation, storage, and microgrid deployments over the next 2–5 years.

- Policy backdrop: potential incentives for on-site generation and storage could alter economics, while grid modernization programs may reduce resilience risk and grid-connection costs.
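To put the price range above in context, the sketch below estimates a yearly electricity bill for a single facility at 6 and 9 cents per kWh. The 100 MW load and the assumption of constant, round-the-clock draw are hypothetical choices for illustration, not figures from the article.

```python
HOURS_PER_YEAR = 8760


def annual_energy_cost(load_mw: float, price_per_kwh: float,
                       load_factor: float = 1.0) -> float:
    """Yearly electricity cost in dollars for a facility with the given average load."""
    annual_kwh = load_mw * 1000 * HOURS_PER_YEAR * load_factor
    return annual_kwh * price_per_kwh


if __name__ == "__main__":
    # Hypothetical 100 MW facility running at full load all year.
    for price in (0.06, 0.09):
        cost = annual_energy_cost(100, price)
        print(f"At ${price:.2f} per kWh: ${cost / 1e6:.1f}M per year")
```

For this assumed facility, the gap between the low and high ends of the range amounts to tens of millions of dollars per year, which helps explain why siting and contract structure draw so much investor attention.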

Bottom Line

The claim that AI data centers will fund their own power flips a long-standing industry assumption on its head, but it is not a magic wand for cheaper electricity. Energy economics, capital planning, and grid policy will shape how—or if—the idea is realized. For now, the market will keep weighing the potential benefits against the real-world costs of reliability, maintenance, and energy hedging. The conversation around power, AI, and investment is only getting louder, and the next few earnings cycles could reveal how seriously this proposal is being taken by operators and policymakers alike.
