A software tester's experiment exposed a startling vulnerability in a popular line of AI-enabled home vacuums, putting thousands of devices at risk of surveillance. While reverse-engineering how the vacuum talks to its cloud service, the tester retrieved a token meant to prove ownership of a single device. Instead of limiting that token to the tester's own vacuum, the system treated the tester as the owner of nearly seven thousand devices across twenty-four countries. The episode underscores a widening concern: the rapid spread of AI-powered home gear can outpace privacy safeguards.

During testing, investigators noted that the tester had accidentally gained access to thousands of devices while probing the token-based access controls. The situation was first reported by technology outlets and has since drawn attention from consumer privacy advocates, cybersecurity researchers, and policymakers. Although the tester disclosed the flaw responsibly to journalists and researchers, the breadth of the exposure raises urgent questions about who can see what inside millions of living rooms.

What Happened

The incident centers on how a cloud service verifies ownership of a given device. A token designed to confirm a user's right to control one specific vacuum was exploited during a debugging session, opening a pathway to thousands of other units. In practical terms, the tester could potentially have accessed live video streams, microphone audio, and floor plans generated by the devices' sensors. Experts caution that even if no wrongdoing occurred in this case, the underlying vulnerability could be exploited by criminals or unscrupulous third parties if left unpatched.
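The failure described above is a familiar one in cloud APIs: a service confirms that a token is genuine but never checks which devices that token is entitled to control. The manufacturer has not published its backend logic, so the following is only a minimal, hypothetical sketch of that class of flaw and its fix. The names `TOKENS`, `DEVICE_OWNERS`, `authorize_flawed`, `authorize_scoped`, and `visible_devices` are invented for illustration.

```python
# Hypothetical in-memory stand-ins for a vendor's cloud database (illustration only).
# Maps an access token to the account it was issued to.
TOKENS = {"tok-alice": "alice", "tok-bob": "bob"}
# Maps each device ID to the account that registered it.
DEVICE_OWNERS = {"vac-001": "alice", "vac-002": "bob", "vac-003": "bob"}


def authorize_flawed(token: str, device_id: str) -> bool:
    """Checks only that the token is genuine and the device exists, not who owns it."""
    return token in TOKENS and device_id in DEVICE_OWNERS


def authorize_scoped(token: str, device_id: str) -> bool:
    """Also checks that the token's account actually owns the requested device."""
    account = TOKENS.get(token)
    return account is not None and DEVICE_OWNERS.get(device_id) == account


def visible_devices(token: str, check) -> list:
    """Lists every device a token would be allowed to control under the given check."""
    return sorted(d for d in DEVICE_OWNERS if check(token, d))


if __name__ == "__main__":
    print(visible_devices("tok-alice", authorize_flawed))  # ['vac-001', 'vac-002', 'vac-003']
    print(visible_devices("tok-alice", authorize_scoped))  # ['vac-001']
```

Under the flawed check, one valid token reaches every registered device; the scoped check ties each request back to the device's registered owner.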

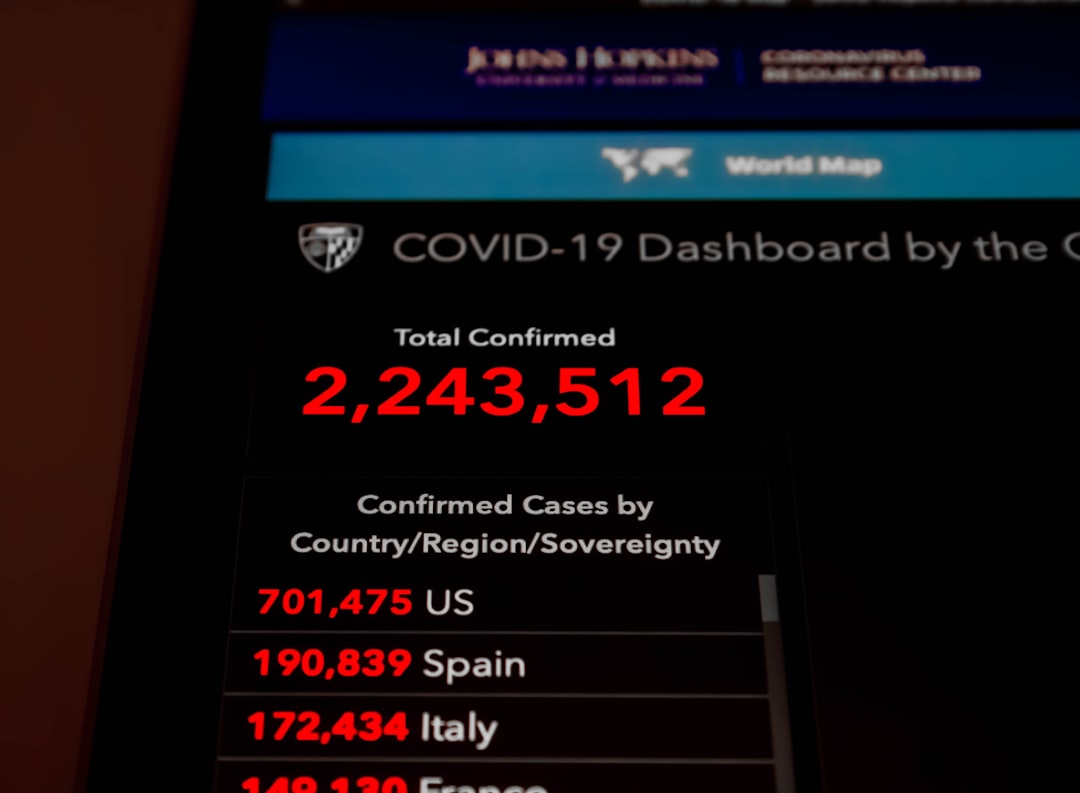

- Scope: roughly seven thousand devices spanning 24 countries were implicated by the testing session.

- Impact: attackers who obtained similar credentials could gain access to live feeds, audio capture, and mapping data.

- Response: the tester reported the bug to tech outlets and is cooperating with researchers to help patch the flaw.

Industry insiders say this kind of flaw is not isolated. As more devices connect to the internet and share more data, a single token mishap can cascade into widespread exposure. The phrase "accidentally gained access to thousands" has already begun circulating in security forums as shorthand for a problem that could become increasingly common if not addressed quickly.

Why This Matters for American Homes

The event arrives as households lean into automation, with robotic helpers, smart lighting, and voice assistants becoming standard. A 2020 study from Parks Associates estimated that about 54 million U.S. households had at least one smart home device; adoption has accelerated since, especially in markets with high-speed internet and rising home-tech budgets. The resulting flood of new devices promises convenience but also widens the surface for data collection and potential misuse.

Privacy advocates warn that the risk goes beyond a single product line. When a home's devices share feeds and maps with cloud servers, even unintended access can reveal intimate details about daily routines, living spaces, and patterns of movement. The exposure is not purely hypothetical: security researchers have raised similar concerns about cloud storage of sensitive footage, and about how metadata from multiple devices in a single home can be combined to piece together a private life.

Regulatory and Industry Signals

Regulators and industry groups are increasingly alert to cyber risk in consumer devices. Calls for stronger default security, rapid firmware updates, and clearer data-use disclosures are growing louder as more families bring AI into bedrooms, kitchens, and living rooms. The current discourse includes debates over how to enforce end-to-end encryption for device communications, how to share credentials securely among family members, and how to limit large-scale data exposure when a token or key is compromised.

- Security posture: experts emphasize the need for hardware-bound tokens, frequent software patches, and robust anomaly detection in cloud services.

- Consumer protections: policymakers are weighing requirements for transparent data retention policies and clear opt-in controls for video and audio data collected by smart devices.
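The anomaly-detection point can be made concrete with the incident's own numbers. A minimal sketch, assuming the cloud service logs which account touched which device: count the distinct devices each account reaches and flag any account far above what a single household plausibly owns. The log format, the function name `flag_suspicious_accounts`, and the threshold of 10 are hypothetical choices for illustration, not any vendor's actual system.

```python
from collections import defaultdict

# Hypothetical ceiling: a household rarely links more than a handful of vacuums.
MAX_DEVICES_PER_ACCOUNT = 10


def flag_suspicious_accounts(access_log, limit=MAX_DEVICES_PER_ACCOUNT):
    """Return {account: distinct_device_count} for accounts exceeding `limit`.

    `access_log` is an iterable of (account_id, device_id) pairs, e.g. parsed
    from cloud API request logs (a format assumed for this sketch).
    """
    devices_by_account = defaultdict(set)
    for account_id, device_id in access_log:
        devices_by_account[account_id].add(device_id)
    return {
        account: len(devices)
        for account, devices in devices_by_account.items()
        if len(devices) > limit
    }


if __name__ == "__main__":
    log = [("alice", "vac-001"), ("alice", "vac-001"), ("bob", "vac-002")]
    # One account suddenly reaching roughly the number of devices reported here.
    log += [("tester", f"vac-{n:05d}") for n in range(7000)]
    print(flag_suspicious_accounts(log))  # {'tester': 7000}
```

A real deployment would likely also look at time windows and geography, but even a simple count like this would make one account controlling thousands of vacuums stand out.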

What Consumers Can Do Now

While manufacturers address the vulnerability, consumers can take steps to reduce risk in the near term. Security researchers advise strengthening home networks and minimizing data exposure by adjusting device settings and network architecture.

- Update firmware and app software regularly to ensure the latest security patches are applied.

- Enable two-factor authentication where available and disable remote access when not needed.

- Create a separate smart-home network or VLAN for IoT devices to limit cross-network access.

- Review permissions for cameras and mics, and disable features that aren’t essential.

- Pay attention to data policies and where footage or maps are stored, including whether cloud storage is encrypted and who can access it.

Analysts say the situation highlights the need for industry-wide standards around token handling, device provisioning, and secure cloud communication. If manufacturers move faster on security hardening, consumer confidence could recover even as the market for home robotics expands in a volatile, high-growth environment.

Looking Ahead: The Road to Safer AI Homes

As AI-driven home helpers proliferate, the risk calculus for households will hinge on a balance between convenience and privacy. The incident underscores a broader truth: when devices become portals to private space, even small mistakes can scale into large exposures. Security experts warn that accidental vulnerabilities in one product line can build momentum for a wider push toward stringent security standards across the consumer-tech ecosystem.

Industry leaders insist that consumer safety remains a top priority. They point to ongoing investments in hardware and software protections, better authentication methods, and more transparent governance of data generated inside homes. For families, the takeaway is clear: treat AI-enabled devices as part of a broader security strategy, not as isolated gadgets that can be left unprotected.

In the short term, the incident will likely accelerate discussions among lawmakers about privacy protections for connected devices and the standards by which vendors must test and publish security updates. For now, the focus remains on patching the vulnerability, limiting exposure, and giving households the tools to safeguard their own spaces against a landscape where AI-powered devices are becoming as common as toasters, yet far more capable of capturing detail.

As the story evolves, security researchers warn that the pattern of accidental exposure could recur if the industry does not align on robust, scalable safeguards. The phrase "accidentally gained access to thousands" may fade into a cautionary footnote, but its meaning will linger: in a world of connected homes, a single token can be a doorway into thousands of them.

Bottom line for investors and consumers: the AI home boom is real, but it must be matched with real security. If manufacturers act decisively, this week’s breach may become a turning point toward safer, smarter homes instead of a new frontier for privacy anxiety.
