The Modern Clearing Challenge

As of March 2026, a growing network of AI agents negotiates and settles trillions of dollars in real time across industries from finance to logistics. This is not a single warehouse where checks are cleared; it is a sprawling digital web where autonomous systems negotiate, verify, and execute transactions with minimal human oversight. The question on everyone’s mind: can this distributed system stay stable without a traditional referee guiding every move?

The answer hinges less on arithmetic and more on architecture. Banks and nonbanks alike have built the capacity to compute balances, track debits and credits, and reconcile ledgers. What remains stubborn is the social contract that holds a network of rival actors together when there is no single authority with enforcement power over all participants.

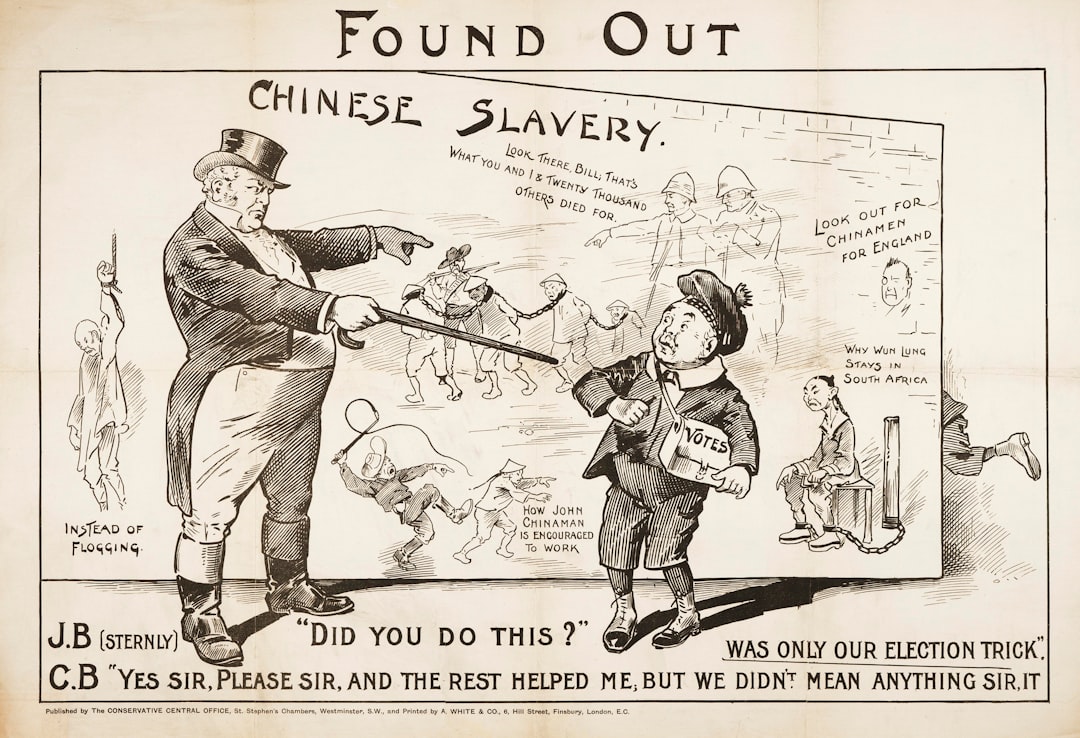

History offers a blunt but valuable reminder. In the 19th century, London’s bankers faced a problem far larger than any single balance sheet. They needed a way for dozens of institutions to honor daily settlements through trust, not coercion. The London Bankers’ Clearing House emerged as the prototype for modern clearing: a voluntary, reputation-based system in which exclusion from the network was the strongest sanction.

A Fresh Look at the 19th Century Banking Problem

The 19th century banking problem was simple to describe but brutal in consequence: how do you synchronize dozens of independent banks so that a payment owed to one bank and a settlement due from another do not unravel a city’s payment system? The solution was neither magic nor heavy-handed law; it was a social contract backed by reputational enforcement. Banks agreed to clear only through a shared system, to verify each other’s settlements, and to punish violators by cutting off their access to the network. The rest followed quickly.

For almost a century, this reputation-driven mechanism worked because it attached identity to action. You couldn’t hide behind a veneer of anonymity if you wanted to participate in the clearing cycle. The result was a robust trust layer that underpinned the era’s finance and helped power a growing economy across continents.

The AI Era and the Reframing of Clearing

Today’s digital landscape is different in scale and speed. AI agents handle thousands of interactions every second, negotiating terms, aligning risk, and locking in settlement decisions across diverse networks. There is no single, universal arbiter with the authority to veto, audit, or fine every participant. The challenge now is to build a trust architecture robust enough to withstand strategic deviations, data privacy risks, and cascading failures that could ripple through global markets.

Experts argue that the modern equivalent of the Clearing House must rest on three pillars: transparent behavior standards, verifiable action trails, and credible consequences for rule violations. Without a clear, shared standard and a practical mechanism to enforce it, liquidity vanishes from the system and counterparties retreat to fragmented edges, weakening price discovery and increasing systemic risk.

Designing a Modern Trust Framework

Industry voices describe a blueprint that blends technical rigor with social accountability. Identity is no longer a mere label; it is a verified, auditable fingerprint tied to each AI agent’s conduct. Reputation is earned over time, reflecting both precision and integrity in settlements. Access rules specify who can transact, under what conditions, and what obligations apply when disputes arise.

- Machine-readable settlement standards coupled with tamper-evident logs

- Shared governance that can pause or remove an AI agent from the clearing loop

- Public, regulator-accessible audit trails to deter manipulation and reveal weakness
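One common way to make a log tamper-evident is to chain entries together by hash, so that changing any earlier record invalidates every hash after it. The sketch below is a minimal illustration of that idea and not a description of any deployed clearing system; the record fields (`agent`, `counterparty`, `amount`) and the function names are assumptions made for the example.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash that precedes the first entry


def entry_hash(prev_hash: str, record: dict) -> str:
    """Hash a record together with the hash of the entry before it."""
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(log: list, record: dict) -> None:
    """Append a record, chaining it to the current tail of the log."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    log.append({
        "record": record,
        "prev_hash": prev_hash,
        "hash": entry_hash(prev_hash, record),
    })


def verify_log(log: list) -> bool:
    """Recompute every hash; an edited or reordered entry breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != entry_hash(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical settlement records, for illustration only.
log = []
append_entry(log, {"agent": "agent-a", "counterparty": "agent-b", "amount": 1200})
append_entry(log, {"agent": "agent-b", "counterparty": "agent-c", "amount": 450})
intact = verify_log(log)

# Copy the log and alter one settled amount after the fact.
tampered = [dict(e, record=dict(e["record"])) for e in log]
tampered[0]["record"]["amount"] = 12
tamper_detected = not verify_log(tampered)
```

A chain like this reveals edits and reordering, but it does not by itself reveal entries deleted from the end of the log. Detecting that would require the latest hash to be published or countersigned somewhere the log keeper cannot rewrite, which is one role a regulator-accessible audit trail could play.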

Some observers propose interoperability as a core principle. As real-time rails expand—fed by central banks’ payment networks and private clearing platforms—the ability of different systems to speak the same language becomes essential. Fragmentation would create pockets of speed and pockets of risk, undermining the confidence that sustains large-scale AI-driven settlement.

Key Data Points Shaping the Narrative

Industry trackers place real-time settlement volumes in the tens of trillions annually as AI-driven networks mature. In 2025, the global real-time payments market was estimated to exceed 60 trillion USD, with growth expected to continue as AI agents assume more decision-making responsibilities. Within the United States, adoption of real-time rails has accelerated, with banks and fintechs reporting significant increases in volume and complexity across cross-institution interactions.

Analysts caution that metrics vary by region and network, but the direction is clear: more actors are transacting in real time, more data points are generated, and the potential for misalignment grows if trust mechanisms do not scale. In pilot programs and early deployments, anomaly rates have stayed below one basis point (0.01% of transactions), suggesting that the system can run cleanly when disciplined. Even so, a single large breach would be costly across multiple sectors.

For context, industry briefings frequently cite the following figures:

- Global real-time settlement volume of around 60 trillion USD in 2025, with a trajectory toward higher levels by 2027

- U.S. real-time rails handling a rising share of everyday payments as more institutions join the network

- Anomaly rates in controlled pilots below 0.01% of transactions, a level that could still generate substantial absolute risk at full scale
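To make the scale concrete, the short calculation below multiplies the two figures quoted above. It is a rough upper-bound sketch, not a loss estimate: it assumes that anomalous transactions are average-sized, so that 0.01% of transactions corresponds to 0.01% of value, which the cited figures do not establish. The variable names are illustrative.

```python
from fractions import Fraction

# Figures taken from the text: about 60 trillion USD per year,
# and an anomaly rate of at most 0.01% (one basis point).
annual_volume_usd = 60 * 10**12
anomaly_rate = Fraction(1, 10_000)

# ASSUMPTION: anomalous transactions are average-sized, so the share of
# value affected equals the share of transactions affected.
value_at_risk_usd = annual_volume_usd * anomaly_rate

print(f"Upper-bound anomalous value: {int(value_at_risk_usd):,} USD per year")
```

Under that assumption the bound comes to 6 billion USD a year, which illustrates why a rate that looks negligible as a percentage can still matter in absolute terms.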

Lessons From History for Today’s Markets

The enduring lesson from the 19th century is that trust is both fragile and essential. An open, reputation-based system can function at scale when participants believe the rules are fair and the consequences credible. AI adds velocity and complexity, but it does not erase this foundational truth. If anything, the stakes are higher because faster settlement cycles magnify the impact of any misalignment or manipulation.

Regulators and market participants are testing hybrid models that blend private governance with public oversight. Some central banks are exploring “principles-based” AI governance, while others advocate for auditability standards that resemble traditional financial reporting but tailored for autonomous decision makers. The objective is not to domesticate AI with heavy-handed rules; it is to align incentives so that honest behavior is the path of least resistance.

What to Watch Next

Three developments will shape whether the AI-driven clearing model can stay resilient in 2026 and beyond:

- Technical interoperability across networks that operate with different data schemas, security models, and latency profiles

- Regulatory clarity on responsibility for AI-driven settlements, including how to attribute fault in cross-border cases

- Economic incentives that reward compliance and punish anti-competitive or destructive behavior without chilling innovation

As the market digests these shifts, the core question remains the same: can a complex, distributed system enforce agreements with the same efficacy as a centralized clearing house did a century and a half ago? The international financial system will be watching closely as the talk moves from theory to real-life testing and adoption.

The 19th Century Problem in a 21st Century Jacket

The phrase “19th century banking problem” surfaces often in boardrooms and think tanks as they weigh AI’s promise against its risks. The parallels are striking: you still need a binding set of rules, a way to verify implementation, and credible costs for non-compliance. The difference is the scale and speed of the decision machine at the heart of modern markets. The essence remains unchanged: trust, once earned, keeps the system running fast enough to power tomorrow’s economy. Without it, even the most advanced AI can destabilize the flow of capital just as surely as a bad balance sheet once did.

So as we advance, the focus for banks, regulators, and technology providers should be clear. Build the trust infrastructure first: identity tied to action, transparent and immutable logs, and a governance framework capable of suspending or expelling a rogue AI agent. Only then will the promise of AI-driven real-time settlement translate into durable gains for households and businesses alike, reducing friction while preserving the safeguards that keep money moving when it matters most.
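As a rough illustration of the governance piece, the sketch below models a membership registry in which an agent can be suspended, reinstated, or expelled, and in which settlement is allowed only between active members. Every name here (`ClearingRegistry`, `Status`, `may_settle`, and the agent identifiers) is hypothetical, and the rule that expulsion is permanent is an assumption chosen to mirror exclusion as the strongest sanction.

```python
from enum import Enum


class Status(Enum):
    ACTIVE = "active"
    SUSPENDED = "suspended"
    EXPELLED = "expelled"


class ClearingRegistry:
    """Hypothetical membership list for a shared clearing loop."""

    def __init__(self) -> None:
        self._status = {}  # maps agent_id to its current Status

    def admit(self, agent_id: str) -> None:
        """Add a verified agent as an active member."""
        self._status[agent_id] = Status.ACTIVE

    def suspend(self, agent_id: str) -> None:
        """Pause an active agent; an expelled agent stays expelled."""
        if self._status.get(agent_id) is Status.ACTIVE:
            self._status[agent_id] = Status.SUSPENDED

    def reinstate(self, agent_id: str) -> bool:
        """Return a suspended agent to active status; report success."""
        if self._status.get(agent_id) is Status.SUSPENDED:
            self._status[agent_id] = Status.ACTIVE
            return True
        return False

    def expel(self, agent_id: str) -> None:
        """Remove a known agent for good (ASSUMPTION: no readmission)."""
        if agent_id in self._status:
            self._status[agent_id] = Status.EXPELLED

    def may_settle(self, payer: str, payee: str) -> bool:
        """Allow settlement only when both parties are active members."""
        return (self._status.get(payer) is Status.ACTIVE
                and self._status.get(payee) is Status.ACTIVE)


registry = ClearingRegistry()
registry.admit("agent-a")
registry.admit("agent-b")

before = registry.may_settle("agent-a", "agent-b")        # both active
registry.suspend("agent-b")
while_suspended = registry.may_settle("agent-a", "agent-b")
registry.reinstate("agent-b")
after_reinstate = registry.may_settle("agent-a", "agent-b")
registry.expel("agent-b")
readmitted = registry.reinstate("agent-b")                # expelled agents cannot return
```

In a real network the decision to suspend or expel would come from the shared governance process, not from a single method call; the sketch only shows how that decision could gate access once it has been made.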

In the end, the 19th century banking problem may never disappear completely. It can, however, become a solvable design problem: a shared clearing architecture that blends human oversight with machine precision, anchored by reputation and reinforced by enforceable consequences. If that balance holds, the AI era will not merely replicate history; it will rewrite the terms of trust for global finance.
