Hooked on Momentum: Why This Headline Marks a Turning Point

When big tech news breaks, investors instinctively ask: what does this mean for profits, growth, and risk? The rumored development behind the headline "OpenAI just became Broadcom's new chip customer" could be one of the most consequential shifts in AI hardware for 2026. Even if the details remain in flux, the idea that OpenAI, a leading developer of AI models and a major buyer of AI compute, would anchor Broadcom's chip strategy points to a broader evolution in the AI infrastructure race. This article unpacks what that shift could mean for Broadcom, OpenAI, other chipmakers, and you as an investor.

Setting The Stage: AI Hardware in 2026

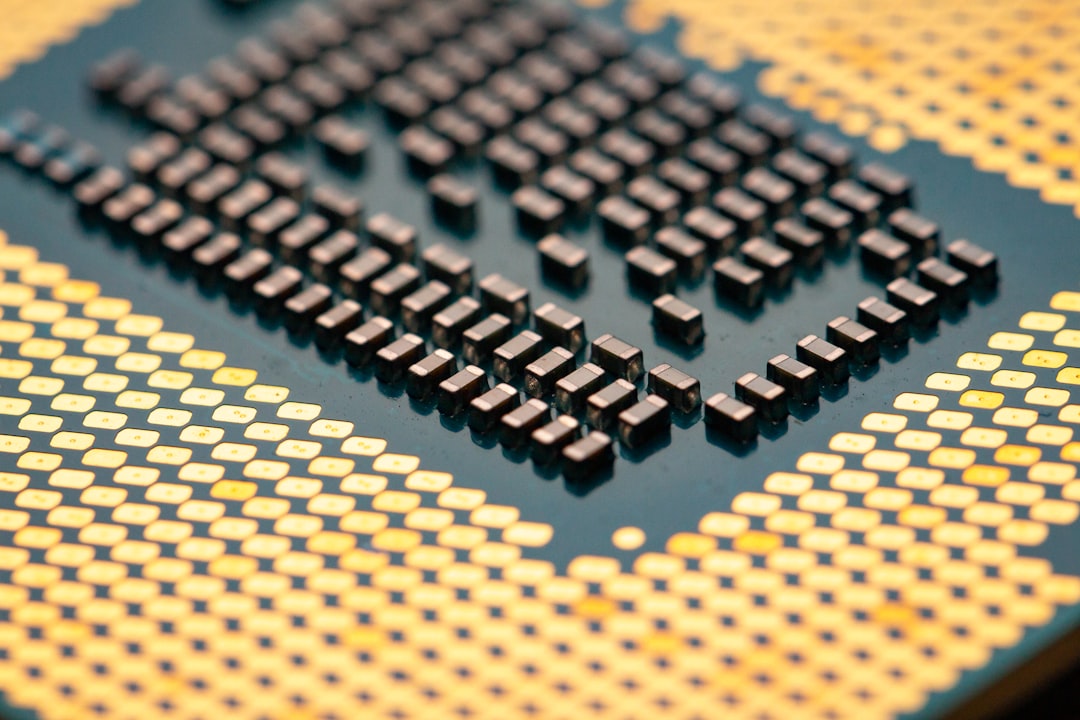

The AI hardware market has long been dominated by a single powerhouse: Nvidia, whose GPUs are the default choice in data centers around the world. But the landscape is changing as software-centric AI players look to reduce their reliance on any single supplier and to optimize for performance, power usage, and total cost of ownership. In this environment, a headline like "OpenAI just became Broadcom's new chip customer" underscores a broader trend: the push to diversify the supply chain, secure scale, and develop custom silicon tailored to specific AI workloads.

- Nvidia remains a strong incumbent in many AI training and inference scenarios, but customers are increasingly seeking alternatives for cost and resilience.

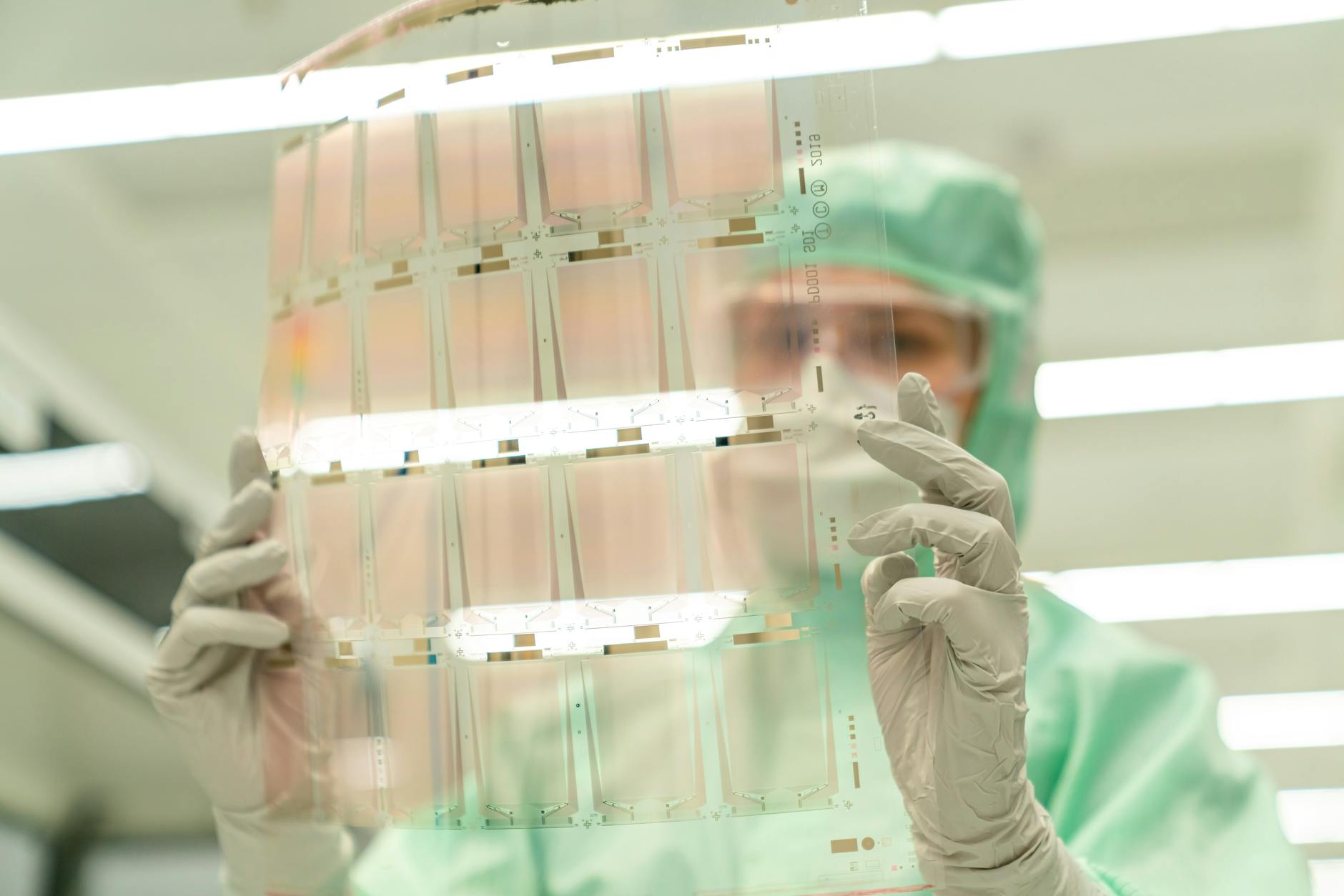

- Cloud providers and enterprise AI teams are exploring custom ASICs and NPUs designed to accelerate particular models or workloads, often in collaboration with foundries and silicon partners.

- Chipmakers are expanding beyond pure silicon to system-level acceleration, including software, firmware, and integration with AI frameworks to squeeze out more efficiency per watt.

In this context, the idea that OpenAI just became Broadcom's new chip customer would reflect a strategic move toward deeper collaboration, not merely a one-off purchase. It signals both the importance of reliable supply and the potential for tailored hardware that can unlock specific AI workloads at scale.

What Does the OpenAI-Broadcom Tie Really Imply?

Assuming the news is accurate or at least directionally true, several implications emerge for both players and the broader market:

- Revenue visibility and margins for Broadcom: A new, sizable customer for AI accelerators could provide steady, long-term revenue streams beyond Broadcom's existing portfolio. If OpenAI locks in multi-year commitments covering fabrication and packaging capacity, the deal could improve revenue visibility and potentially lift margins on the relevant product lines.

- Customization and differentiation: OpenAI's scale and unique model architectures may require silicon tuned for specific operations—matrix multiplications, attention mechanisms, and inference latency targets. A Broadcom collaboration could yield chips optimized for OpenAI's workloads, with benefits that ripple into efficiency and performance gains.

- Supply chain resilience: Broadcom's breadth across networking, storage, and processing components could help OpenAI diversify its hardware stack. Fewer single-vendor dependencies translate into lower risk during supply disruptions and capacity crunches.

- Competitive signaling: If OpenAI dedicates resources to bespoke hardware, it could accelerate the shift away from purely off-the-shelf accelerators toward platform-specific accelerators, a trend that could impact Nvidia’s competitive standing in certain segments.

These implications aren’t just about one contract; they’re about a broader shift toward collaboration between AI developers and silicon providers to optimize for cost, speed, and energy efficiency at scale. The market will watch for revenue disclosures, contract terms, and any accompanying moves by OpenAI to bring more of its stack in-house or to spread it across other partners.

Broadcom’s Playbook: From Chips to AI-Ready Solutions

Broadcom has built a diversified business around high-performance silicon, networking, and data-center solutions. A move into AI-optimized hardware would leverage its strengths in high-volume, reliability-focused design and manufacturing, while expanding into the AI-specific tier of performance-per-watt and latency optimization. Here are the levers Broadcom could pull:

- Custom AI accelerators: Design chips tailored for OpenAI’s inference workloads, including optimized memory bandwidth, tensor operations, and on-die cache architectures that reduce latency.

- System-level integration: Pair accelerators with interconnects (such as PCIe) and software stacks to minimize data movement and energy usage, improving total cost of ownership for large-scale AI deployments.

- Software and firmware collaboration: Co-develop software runtimes and schedulers that maximize hardware efficiency for specific OpenAI models, potentially creating a moat around Broadcom-based platforms.

- Economies of scale: Leverage Broadcom’s manufacturing relationships and supply chain efficiency to offer cost-competitive AI accelerators at scale, a critical advantage as model sizes and data requirements rise.

For Broadcom, the strategic payoff isn’t just a one-time sale. It’s about becoming an integral part of OpenAI’s hardware backbone, with recurring revenue streams from support, software updates, and potentially new generations of chips aligned to model advancements.

What It Could Mean for OpenAI

From OpenAI’s perspective, contracting with a broad-based supplier like Broadcom offers several potential benefits and trade-offs:

- Scalability and efficiency: Custom hardware could dramatically reduce inference latency and energy per operation, translating into lower operating costs as usage scales from millions to tens of millions of queries per day.

- Supply chain resilience: A diversified supplier base reduces risk tied to a single provider’s factory shutdowns or capacity constraints—an important consideration given recent global supply-chain fluctuations.

- Control vs. dependency: A deeper hardware collaboration gives OpenAI greater control over performance characteristics, but it also increases dependency on a single partner for critical components.

Cost efficiency is a double-edged sword. While bespoke silicon can lower per-inference costs as volumes grow, initial development costs and longer ramp-up times may temper early-stage profitability. If the alliance proves durable, OpenAI could achieve a more predictable cost curve that aligns with rapid top-line growth in AI usage across enterprise and consumer segments.
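
The trade-off described above can be framed as a simple break-even calculation: up-front development cost divided by the per-unit saving gives the number of accelerators that must be deployed before custom silicon pays for itself. The sketch below is only an illustration. The function name and every dollar figure are hypothetical assumptions, not numbers from any disclosed contract.

```python
import math


def break_even_units(development_cost: float,
                     unit_cost_off_the_shelf: float,
                     unit_cost_custom: float) -> int:
    """Units needed before per-unit savings repay the up-front development cost.

    All inputs are hypothetical planning figures in the same currency.
    """
    saving_per_unit = unit_cost_off_the_shelf - unit_cost_custom
    if saving_per_unit <= 0:
        # Without a per-unit saving, the development cost is never recovered.
        raise ValueError("custom silicon must be cheaper per unit to break even")
    return math.ceil(development_cost / saving_per_unit)


if __name__ == "__main__":
    # Hypothetical inputs: $500M development cost, $25,000 per off-the-shelf
    # accelerator, $15,000 per custom accelerator.
    units = break_even_units(500_000_000, 25_000, 15_000)
    print(f"Break-even volume: {units:,} units")  # Break-even volume: 50,000 units
```

A real analysis would also account for ramp-up time, yield, and software porting costs, which this sketch deliberately ignores.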

Implications for Nvidia and the AI Hardware Landscape

Any movement toward deeper collaboration between OpenAI and Broadcom could ripple through the AI hardware ecosystem. Nvidia’s GPUs have been the default foundation for many AI workloads, but a shift toward bespoke accelerators could lead to a multi-vendor landscape where customers choose different chips for different layers of the AI stack. Here’s what to watch:

- Hybrid ecosystems: Expect more enterprises to deploy a mix of accelerators optimized for training versus inference, depending on model size, latency requirements, and energy budgets.

- Pricing dynamics: Competition among silicon providers could compress average selling prices (ASPs) for certain AI accelerators, while premium pricing might emerge for chips with demonstrated model-specific advantages.

- Software-integration play: Chips that ship with optimized software stacks and robust tooling could gain a leg up over pure hardware competitors, reinforcing a combined hardware-software moat.

For Nvidia, the era of single-vendor dependence for large-scale AI deployments may be giving way to a portfolio approach that lets customers optimize for their own workloads. In such a world, investors should look for signs of diversification in customers’ commitments, as well as evidence of cross-sell opportunities across the AI lifecycle—from training to inference to model management.

Investment Implications for 2026: A Practical Roadmap

If the news holds and OpenAI becomes Broadcom’s flagship AI accelerator customer, what does it mean for investors navigating 2026? Here are practical angles to consider:

- Revenue visibility and growth cadence: Long-term commitments could smooth Broadcom’s AI-related revenue, reducing quarterly volatility and strengthening earnings visibility.

- Margin trajectory: Custom silicon programs often start with heavier R&D and lower margins, but scale and learning curves can lift margins as volume grows and design wins accumulate.

- Capital expenditure cycles: AI hardware buys tend to ride a data-center capex wave. Watch for commentary on data-center expansion, cloud price cycles, and any guidance related to AI-specific product lines.

- Risk factors to monitor: Dependency on one major customer for key product lines, supply-chain disruptions, and the pace of competing AI hardware architectures.
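
One way to put a number on the customer-concentration risk in the last bullet is to compute the largest customer's share of revenue alongside a Herfindahl-Hirschman-style index (the sum of squared percentage shares, ranging from near 0 to 10,000). Applying that index to a company's customer mix is an assumption made here for illustration; it is not a method the article or either company uses. The customer names and revenue figures below are hypothetical.

```python
def concentration_metrics(revenue_by_customer: dict[str, float]) -> tuple[float, float]:
    """Return (largest customer share in percent, HHI-style index on a 0-10,000 scale)."""
    total = sum(revenue_by_customer.values())
    if total <= 0:
        raise ValueError("total revenue must be positive")
    # Each customer's share of total revenue, expressed in percent.
    shares_pct = [100 * revenue / total for revenue in revenue_by_customer.values()]
    top_share = max(shares_pct)
    # Sum of squared percentage shares: higher means more concentrated.
    hhi = sum(share ** 2 for share in shares_pct)
    return top_share, hhi


if __name__ == "__main__":
    # Hypothetical revenue mix for one product line, in millions of dollars.
    mix = {"Customer A": 50.0, "Customer B": 30.0, "Customer C": 20.0}
    top, hhi = concentration_metrics(mix)
    print(f"Largest customer: {top:.0f}% of revenue, index: {hhi:.0f}")
```

Tracking how these two figures move from quarter to quarter is more informative than any single reading.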

To navigate this landscape, investors can build a framework that weighs both the upside of a strategic hardware partnership and the downside risk of concentration. Here is a simple scenario analysis to illustrate potential outcomes:

| Scenario | Annual AI Chip Revenue (Broadcom) | Gross Margin on AI Chips | Impact on 2026 EPS |
|---|---|---|---|
| Low Growth | $600 million | 28% | Small uptick vs. base case |
| Base Case | $1.2 billion | 34% | Meaningful uplift in earnings |
| High Growth | $2.1 billion | 38% | Notable revenue beat and multiple expansion |

Note: These numbers are illustrative and depend on contract terms, manufacturing efficiency, and end-market AI demand. They are intended to help frame the decision-making process rather than predict exact outcomes.
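
To make the scenario table easier to compare, the implied gross profit for each row is simply revenue multiplied by gross margin. The short sketch below does that arithmetic using only the illustrative figures from the table. It stops at gross profit because an EPS estimate would need operating costs, tax rate, and share count, none of which are given here.

```python
# Illustrative figures copied from the scenario table:
# (annual AI chip revenue in $ millions, gross margin in percent).
SCENARIOS = {
    "Low Growth": (600, 28),
    "Base Case": (1200, 34),
    "High Growth": (2100, 38),
}


def implied_gross_profit(revenue_musd: float, gross_margin_pct: float) -> float:
    """Gross profit in $ millions implied by revenue and gross margin."""
    return revenue_musd * gross_margin_pct / 100


def scenario_gross_profits(scenarios=SCENARIOS) -> dict:
    """Map each scenario name to its implied gross profit in $ millions."""
    return {
        name: implied_gross_profit(revenue, margin)
        for name, (revenue, margin) in scenarios.items()
    }


if __name__ == "__main__":
    for name, profit in scenario_gross_profits().items():
        print(f"{name}: ${profit:,.0f} million implied gross profit")
    # Low Growth: $168 million implied gross profit
    # Base Case: $408 million implied gross profit
    # High Growth: $798 million implied gross profit
```

Under these illustrative inputs, the high-growth case yields roughly 4.75 times the gross profit of the low-growth case on 3.5 times the revenue, which shows how margin expansion compounds the effect of volume.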

What This Means for OpenAI, Beyond the Headlines

For OpenAI, a close hardware partnership with Broadcom could accelerate product and cost optimization, but it also raises questions about flexibility and control. If OpenAI locks its AI acceleration path to Broadcom, the company gains predictable performance characteristics and potentially favorable pricing due to scale. The flip side is that any shift in strategy—such as model architecture changes or the adoption of alternate accelerators for different models—could complicate existing relationships.

From an investor perspective, the key is to monitor how this partnership complements OpenAI’s broader business plan. Are there plans to monetize hardware innovation through licensing of specialized software stacks, or to offer a family of AI-enabled services that rely on bespoke hardware? The more OpenAI can fuse software offerings with hardware advantages, the more durable the revenue model could become.

Conclusion: A 2026 Storyline in Real-Time

The headline "OpenAI just became Broadcom's new chip customer" resonates beyond a single contract. It signals a potential pivot in how AI developers procure hardware, favoring deeper collaborations that blend bespoke silicon with carefully integrated software ecosystems. If confirmed, this partnership could influence 2026 revenue trajectories, margin profiles, and the competitive dynamics among chipmakers. For investors, the takeaway is to watch for durability, scale, and the real-world performance of AI workloads on Broadcom-designed accelerators. A shift like this doesn’t happen in a day, but the direction, toward co-developed hardware and performance-optimized AI platforms, appears increasingly probable as the AI economy matures.

FAQ

Q1: What does it mean when OpenAI becomes a major hardware customer?

A1: It signals a long-term commitment that can stabilize Broadcom’s revenue, potentially unlock performance gains through customized silicon, and help both companies optimize for scale. It can also shift some competitive dynamics in the AI hardware market.

Q2: How could this affect Nvidia and other chipmakers?

A2: If AI developers increasingly seek multi-source, optimized hardware, Nvidia could see reduced pricing power in certain segments. Competitors may gain share by offering tailored accelerators and integrated software stacks, especially for inference workloads at scale.

Q3: Should investors chase Broadcom stock on this news?

A3: Investors should assess the long-term impact rather than react to a single headline. Look for clarity on contract duration, the size of the opportunity, expected margins, and how this aligns with Broadcom’s overall guidance and capital expenditure plans.

Q4: What risks accompany a bespoke hardware collaboration?

A4: Key risks include customer concentration, execution risk in chip design and manufacturing, potential technology shifts in AI workloads, and the possibility of broader supply-chain disruptions impacting delivery schedules.
